Linux Memory Management: The KSM Memory-Sharing Mechanism

- 2026-03-23 20:09:16

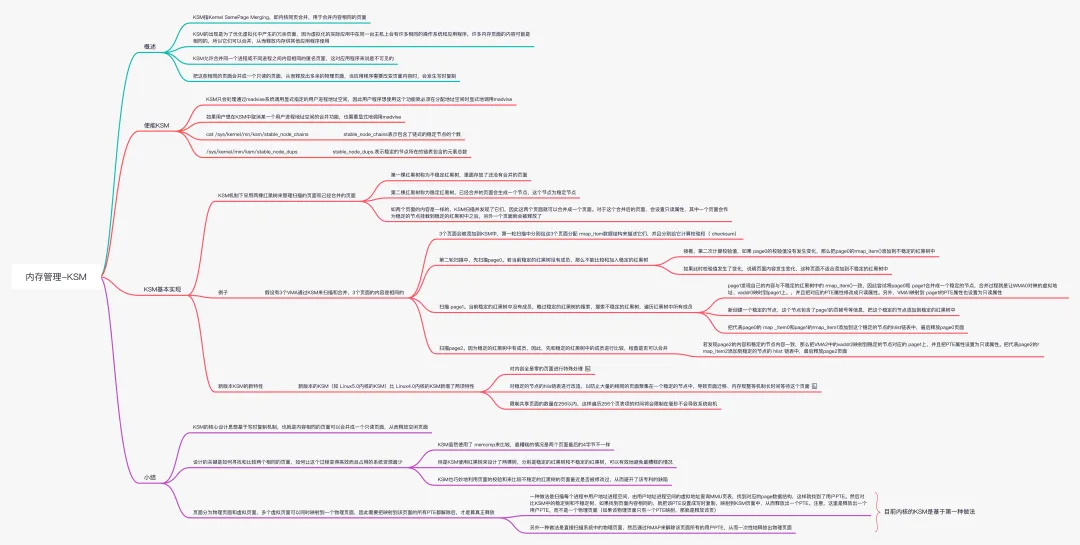

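In a virtualized environment, one host often runs many guests with the same OS and applications, so many of their pages hold identical content. Such pages can be merged, which frees memory for other applications.

KSM (Kernel Samepage Merging) merges anonymous pages with identical content, whether they belong to the same process or to different processes, and does so transparently to the applications.

Identical pages are merged into a single read-only page and the redundant physical pages are freed. When an application later needs to modify the page, a copy-on-write (COW) fault gives it a private copy.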

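1. KSM Implementation

KSM is built on copy-on-write (COW): pages with identical content are merged into one read-only page, which frees the duplicate physical pages.

The implementation has two parts. The first is the kernel thread ksmd, which is started at boot and waits to be woken to scan and merge pages. The second is the madvise path, which wakes ksmd.

KSM only processes user address ranges that have been explicitly registered through the madvise system call, so a program that wants to use it must call madvise(addr, length, MADV_MERGEABLE).

To turn merging off again for a range, the program must likewise call madvise(addr, length, MADV_UNMERGEABLE).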
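A minimal user-space sketch of the two madvise calls described above. The helper names `alloc_mergeable` and `free_mergeable` are hypothetical, chosen only for this illustration; `mmap`, `madvise`, `MADV_MERGEABLE` and `MADV_UNMERGEABLE` are the real Linux interfaces. On a kernel built without CONFIG_KSM the madvise call is expected to fail with EINVAL, which the sketch reports instead of treating as fatal.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <errno.h>
#include <stddef.h>
#include <string.h>
#include <sys/mman.h>

/*
 * Map `len` bytes of anonymous memory, fill them with `fill`, and ask the
 * kernel to consider the range for KSM merging.
 * Returns the mapping, or NULL if mmap() failed. *adv_errno is 0 when
 * madvise() succeeded, otherwise the errno it reported.
 */
static void *alloc_mergeable(size_t len, int fill, int *adv_errno)
{
    assert(len > 0 && adv_errno != NULL);

    void *p = mmap(NULL, len, PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (p == MAP_FAILED)
        return NULL;

    memset(p, fill, len);   /* touch the pages so they are really backed */
    *adv_errno = (madvise(p, len, MADV_MERGEABLE) == 0) ? 0 : errno;
    return p;
}

/* Opt the range back out of KSM and unmap it. Returns munmap()'s result. */
static int free_mergeable(void *p, size_t len)
{
    /* MADV_UNMERGEABLE splits any merged pages back into private copies. */
    (void)madvise(p, len, MADV_UNMERGEABLE);
    return munmap(p, len);
}
```

Registering a range does not merge anything by itself; merging only happens once ksmd is running and has scanned the range.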

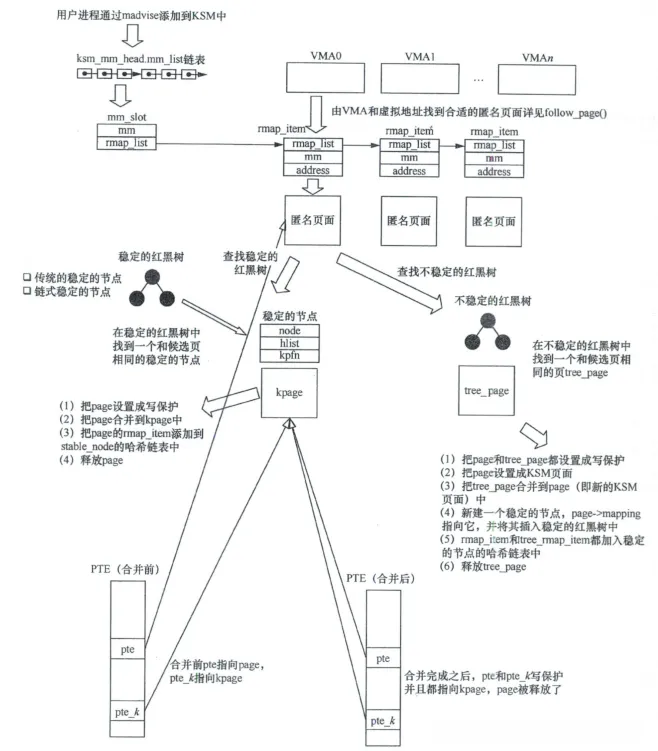

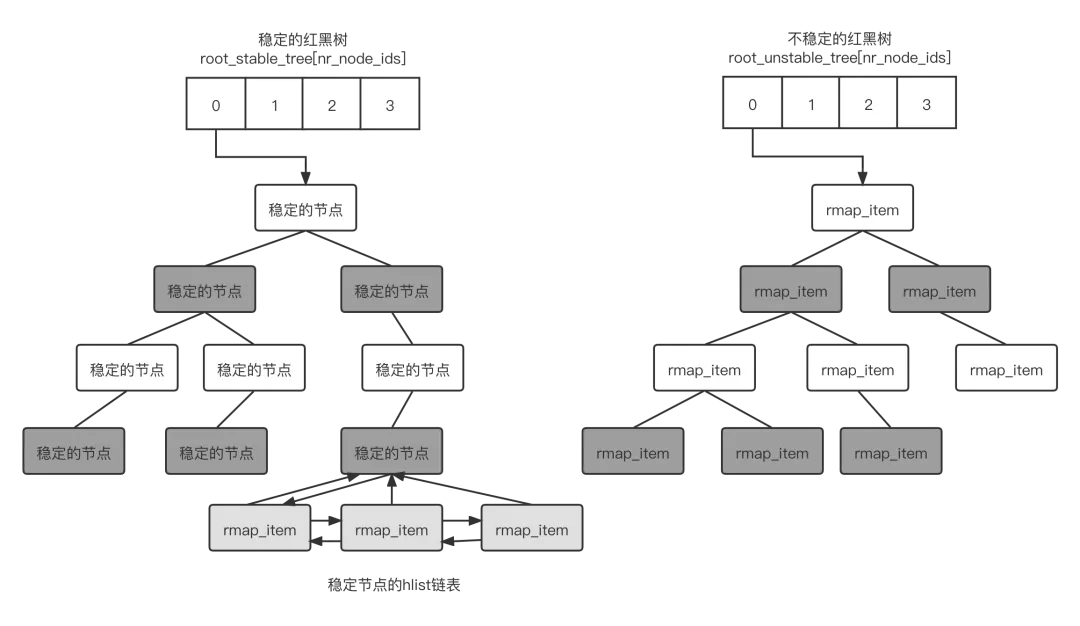

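When two pages have the same content and KSM finds them during a scan, they can be merged into one page. The merged page is marked read-only. One of the two pages becomes a stable node and is inserted into the stable red-black tree; the other page is then freed. The rmap_item structures of both pages are added to the hlist list of the stable node, as shown in the figure below.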

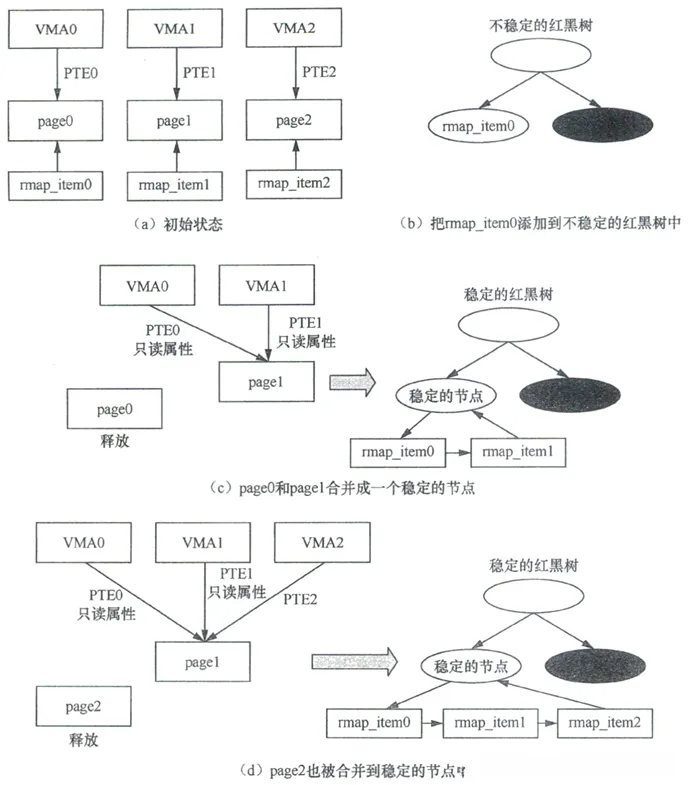

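Suppose there are three VMAs (each representing part of a process address space and each exactly one page in size), with one page mapped into each. The three pages have identical content and are about to be scanned and merged by KSM. The steps are as follows.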

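All three pages are registered with KSM. In the first scan round, an rmap_item structure is allocated to describe each page and a checksum is computed for each, as shown in figure (a).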

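In the second round, page0 is scanned first. The stable red-black tree is still empty, so there is nothing to compare against or merge into. The checksum is then computed a second time. If page0's checksum has not changed, its rmap_item (rmap_item0) is inserted into the unstable red-black tree, as shown in figure (b). If the checksum has changed, the page content is being modified and the page is not a suitable candidate for the unstable tree.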

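Next, page1 is scanned. The stable tree is still empty, so the stable-tree search is skipped and the unstable tree is searched instead. page1 finds that its content matches the page tracked by rmap_item0 in the unstable tree, so KSM tries to merge page0 and page1 into a stable node. Merging means remapping vaddr0, the virtual address in VMA0, onto page1 and making the corresponding PTE read-only; the PTE that maps VMA1 to page1 is also made read-only. A new stable node holding, among other things, page1's page frame number is created and inserted into the stable tree. rmap_item0 (for page0) and rmap_item1 (for page1) are added to the hlist list of that stable node, and finally page0 is freed, as shown in figure (c).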

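Finally, page2 is scanned. The stable tree now has a member, so page2 is first compared against the stable tree to see whether it can be merged. Its content matches the stable node, so vaddr2 in VMA2 is remapped onto page1, the page behind the stable node, and the PTE is made read-only. rmap_item2 (for page2) is added to the stable node's hlist list, and finally page2 is freed, as shown in figure (d).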
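The walkthrough above can be replayed with a small user-space toy model. This is only a sketch under simplifying assumptions, not the kernel algorithm: all `toy_*` names are hypothetical, plain arrays with linear search stand in for the two red-black trees, the checksum is a simple byte sum rather than the kernel's hash, and page tables, PTE write protection and COW are not modelled at all.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define TOY_PAGE_SIZE 64      /* toy page size, not the real PAGE_SIZE */
#define TOY_MAX_PAGES 16

/* Stand-in for one rmap_item plus the page it tracks. */
struct toy_item {
    unsigned char data[TOY_PAGE_SIZE];
    uint32_t oldchecksum;     /* checksum seen on the previous round */
    int merged;               /* 1 once this item hangs off a stable node */
};

struct toy_ksm {
    struct toy_item *items;
    int nitems;
    int stable[TOY_MAX_PAGES];    /* item indices whose page backs a stable node */
    int nstable;
    int unstable[TOY_MAX_PAGES];  /* candidates, rebuilt on every round */
    int nunstable;
};

/* Deliberately simple checksum; the kernel uses a real hash function. */
static uint32_t toy_checksum(const unsigned char *p)
{
    uint32_t sum = 0;
    for (int i = 0; i < TOY_PAGE_SIZE; i++)
        sum += p[i];
    return sum;
}

/* Linear search standing in for a tree lookup by page content. */
static int toy_find(const struct toy_ksm *k, const int *set, int n,
                    const unsigned char *data)
{
    for (int i = 0; i < n; i++)
        if (memcmp(k->items[set[i]].data, data, TOY_PAGE_SIZE) == 0)
            return set[i];
    return -1;
}

/* One full scan round; returns how many physical pages were freed. */
static int toy_scan_round(struct toy_ksm *k)
{
    int freed = 0;

    assert(k->nitems <= TOY_MAX_PAGES);
    k->nunstable = 0;                     /* unstable set is flushed each round */

    for (int i = 0; i < k->nitems; i++) {
        struct toy_item *it = &k->items[i];
        if (it->merged)
            continue;

        /* 1. Try the stable set first. */
        if (toy_find(k, k->stable, k->nstable, it->data) >= 0) {
            it->merged = 1;
            freed++;                      /* this page's own copy is released */
            continue;
        }

        /* 2. Skip pages whose content changed since the last round. */
        uint32_t sum = toy_checksum(it->data);
        if (sum != it->oldchecksum) {
            it->oldchecksum = sum;
            continue;
        }

        /* 3. Look for a twin among the unstable candidates. */
        int twin = toy_find(k, k->unstable, k->nunstable, it->data);
        if (twin >= 0) {
            k->stable[k->nstable++] = i;  /* current page becomes the KSM page */
            k->items[twin].merged = 1;
            it->merged = 1;
            freed++;                      /* the twin's copy is released */
        } else {
            k->unstable[k->nunstable++] = i;
        }
    }
    return freed;
}
```

With three identical pages, the first call only records checksums and frees nothing; the second call puts page0 into the unstable set, merges it into page1, then merges page2 through the stable set, freeing two pages in total.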

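What kinds of pages does KSM merge?

A typical application's memory consists of the following five parts:

- memory mappings of the executable file (page cache)
- anonymous pages allocated by the program
- mappings of files opened by the process
- cache generated by the process's file-system accesses
- kernel buffers (such as slab) generated by the process's accesses to the kernel

KSM only considers the anonymous pages allocated by the process.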

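How are two identical pages found and compared?

KSM uses red-black trees to build two trees: the stable tree and the unstable tree.

KSM uses a page's checksum to tell whether a candidate for the unstable tree has been modified recently.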

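How is memory saved?

There are physical pages and virtual pages, and several virtual pages can map to the same physical page at once. A physical page is therefore truly freed only after every PTE that maps it has been removed.

There are two approaches:

The first scans the VMAs of each process, walks the page tables from each VMA's virtual addresses to find the corresponding struct page, and from there the user PTE.

The page is then compared against KSM's stable tree and unstable tree. If a page with identical content is found, the PTE is made copy-on-write and remapped to the KSM page, which releases one PTE. Note that this releases only a user PTE, not the physical page.

If that physical page was mapped by only one PTE, the page itself is freed.

The second scans the physical pages of the system directly and uses reverse mapping to remove all user PTEs of a page, freeing the physical page in one go.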

1.1 Enabling KSM

Many kernels do not enable KSM by default; it requires building with CONFIG_KSM=y.

In make menuconfig the option is found at:

    Processor type and features
        [*] Enable KSM for page merging

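1.2 KSM Data Structures

KSM has three core data structures: struct rmap_item, struct mm_slot, and struct ksm_scan.

(A diagram of how the three relate is still to be added.)

rmap_item describes one reverse-mapping entry for a virtual address.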

    /**
     * struct rmap_item - reverse mapping item for virtual addresses
     * @rmap_list: next rmap_item in mm_slot's singly-linked rmap_list
     * @anon_vma: pointer to anon_vma for this mm,address, when in stable tree
     * @nid: NUMA node id of unstable tree in which linked (may not match page)
     * @mm: the memory structure this rmap_item is pointing into
     * @address: the virtual address this rmap_item tracks (+ flags in low bits)
     * @oldchecksum: previous checksum of the page at that virtual address
     * @node: rb node of this rmap_item in the unstable tree
     * @head: pointer to stable_node heading this list in the stable tree
     * @hlist: link into hlist of rmap_items hanging off that stable_node
     */
    struct rmap_item {
        /*
         * Links the rmap_items of one mm into a singly-linked list headed by
         * mm_slot->rmap_list; ksm_scan.rmap_list is the scan cursor into it.
         */
        struct rmap_item *rmap_list;
        union {
            /* When in the stable tree: the anon_vma of the VMA. */
            struct anon_vma *anon_vma;  /* when stable */
    #ifdef CONFIG_NUMA
            int nid;                    /* when node of unstable tree */
    #endif
        };
        /* The mm_struct of the owning process. */
        struct mm_struct *mm;
        /* The user virtual address this rmap_item tracks. */
        unsigned long address;          /* + low bits used for flags below */
        /* Previous checksum of the physical page behind that address. */
        unsigned int oldchecksum;       /* when unstable */
        union {
            /* Node that links this rmap_item into the unstable red-black tree. */
            struct rb_node node;        /* when node of unstable tree */
            struct {                    /* when listed from stable tree */
                /* The stable-tree node this rmap_item belongs to. */
                struct stable_node *head;
                /* Link in that stable node's hlist. */
                struct hlist_node hlist;
            };
        };
    };
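The "+ flags in low bits" remark on `address` works because a page-aligned virtual address always has its low 12 bits clear (with 4 KiB pages), so those bits can carry state. The sketch below illustrates the packing in user space. The `item_*` helpers and the `TOY_*` macros are hypothetical; the three flag values are given as recalled from mm/ksm.c of that kernel generation and should be treated as illustrative rather than authoritative.

```c
#include <assert.h>
#include <stdint.h>

#define TOY_PAGE_SHIFT 12
#define TOY_PAGE_MASK  (~(((uintptr_t)1 << TOY_PAGE_SHIFT) - 1))

/* Flag layout as recalled from mm/ksm.c; values are illustrative. */
#define SEQNR_MASK    0x0ffu   /* low bits of the unstable-tree seqnr */
#define UNSTABLE_FLAG 0x100u   /* item is a node of the unstable tree */
#define STABLE_FLAG   0x200u   /* item is listed from a stable node */

/* Combine a page-aligned virtual address with flag bits. */
static uintptr_t item_pack(uintptr_t vaddr, unsigned flags)
{
    assert((vaddr & ~TOY_PAGE_MASK) == 0);      /* must be page aligned */
    assert(flags < (1u << TOY_PAGE_SHIFT));     /* flags must fit below it */
    return vaddr | flags;
}

/* Recover the tracked virtual address by masking the flags away. */
static uintptr_t item_vaddr(uintptr_t address)
{
    return address & TOY_PAGE_MASK;
}

static int item_in_unstable(uintptr_t address)
{
    return (address & UNSTABLE_FLAG) != 0;
}

static int item_in_stable(uintptr_t address)
{
    return (address & STABLE_FLAG) != 0;
}

/* Scan sequence number stored alongside UNSTABLE_FLAG. */
static unsigned item_seqnr(uintptr_t address)
{
    return (unsigned)(address & SEQNR_MASK);
}
```

Storing the sequence number next to the unstable flag is what lets a stale unstable-tree entry from an earlier round be recognised without touching the tree, which matches the "needed when removing unstable node" note on `seqnr` in struct ksm_scan.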

mm_slot describes the mm_struct of a process that has been added to KSM and will be scanned.

    /*
     * A few notes about the KSM scanning process,
     * to make it easier to understand the data structures below:
     *
     * In order to reduce excessive scanning, KSM sorts the memory pages by their
     * contents into a data structure that holds pointers to the pages' locations.
     *
     * Since the contents of the pages may change at any moment, KSM cannot just
     * insert the pages into a normal sorted tree and expect it to find anything.
     * Therefore KSM uses two data structures - the stable and the unstable tree.
     *
     * The stable tree holds pointers to all the merged pages (ksm pages), sorted
     * by their contents. Because each such page is write-protected, searching on
     * this tree is fully assured to be working (except when pages are unmapped),
     * and therefore this tree is called the stable tree.
     *
     * In addition to the stable tree, KSM uses a second data structure called the
     * unstable tree: this tree holds pointers to pages which have been found to
     * be "unchanged for a period of time". The unstable tree sorts these pages
     * by their contents, but since they are not write-protected, KSM cannot rely
     * upon the unstable tree to work correctly - the unstable tree is liable to
     * be corrupted as its contents are modified, and so it is called unstable.
     *
     * KSM solves this problem by several techniques:
     *
     * 1) The unstable tree is flushed every time KSM completes scanning all
     *    memory areas, and then the tree is rebuilt again from the beginning.
     * 2) KSM will only insert into the unstable tree, pages whose hash value
     *    has not changed since the previous scan of all memory areas.
     * 3) The unstable tree is a RedBlack Tree - so its balancing is based on the
     *    colors of the nodes and not on their contents, assuring that even when
     *    the tree gets "corrupted" it won't get out of balance, so scanning time
     *    remains the same (also, searching and inserting nodes in an rbtree uses
     *    the same algorithm, so we have no overhead when we flush and rebuild).
     * 4) KSM never flushes the stable tree, which means that even if it were to
     *    take 10 attempts to find a page in the unstable tree, once it is found,
     *    it is secured in the stable tree. (When we scan a new page, we first
     *    compare it against the stable tree, and then against the unstable tree.)
     *
     * If the merge_across_nodes tunable is unset, then KSM maintains multiple
     * stable trees and multiple unstable trees: one of each for each NUMA node.
     */

    /**
     * struct mm_slot - ksm information per mm that is being scanned
     * @link: link to the mm_slots hash list
     * @mm_list: link into the mm_slots list, rooted in ksm_mm_head
     * @rmap_list: head for this mm_slot's singly-linked list of rmap_items
     * @mm: the mm that this information is valid for
     */
    struct mm_slot {
        /* Links the slot into the mm_slot hash table. */
        struct hlist_node link;
        /* Links the slot into the mm_slot list, whose head is ksm_mm_head. */
        struct list_head mm_list;
        /* Head of this slot's rmap_item list. */
        struct rmap_item *rmap_list;
        /* The mm_struct of the process. */
        struct mm_struct *mm;
    };

ksm_scan records the current scan state.

    /**
     * struct ksm_scan - cursor for scanning
     * @mm_slot: the current mm_slot we are scanning
     * @address: the next address inside that to be scanned
     * @rmap_list: link to the next rmap to be scanned in the rmap_list
     * @seqnr: count of completed full scans (needed when removing unstable node)
     *
     * There is only the one ksm_scan instance of this cursor structure.
     */
    struct ksm_scan {
        /* The mm_slot currently being scanned. */
        struct mm_slot *mm_slot;
        /* The next address to scan. */
        unsigned long address;
        /* Pointer to the next rmap_item to scan. */
        struct rmap_item **rmap_list;
        /* Incremented once per completed full scan; used when removing unstable nodes. */
        unsigned long seqnr;
    };

1.3 madvise Wakes the KSM Kernel Thread

madvise gives the kernel advice about how to handle paging I/O for a memory range. The values relevant to KSM are MADV_MERGEABLE and MADV_UNMERGEABLE.

MADV_MERGEABLE explicitly enables merging for a range of a process's address space.

MADV_UNMERGEABLE explicitly disables merging for a range of a process's address space.

    madvise                      madvise system call
      madvise_vma
        madvise_behavior
          ksm_madvise            handles MADV_MERGEABLE / MADV_UNMERGEABLE
            __ksm_enter          MADV_MERGEABLE: wakes the ksmd thread
            unmerge_ksm_pages    MADV_UNMERGEABLE

__ksm_enter first creates an mm_slot and then wakes the ksmd kernel thread to start KSM processing.

    int __ksm_enter(struct mm_struct *mm)
    {
        struct mm_slot *mm_slot;
        int needs_wakeup;

        /* Allocate an mm_slot to represent this process's mm_struct. */
        mm_slot = alloc_mm_slot();
        if (!mm_slot)
            return -ENOMEM;

        /* Check ksm_run too?  Would need tighter locking */
        /* An empty list means no mm_slot is currently being scanned. */
        needs_wakeup = list_empty(&ksm_mm_head.mm_list);

        spin_lock(&ksm_mmlist_lock);
        /* Store mm in mm_slot->mm and add the slot to the hash table. */
        insert_to_mm_slots_hash(mm, mm_slot);
        /*
         * When KSM_RUN_MERGE (or KSM_RUN_STOP),
         * insert just behind the scanning cursor, to let the area settle
         * down a little; when fork is followed by immediate exec, we don't
         * want ksmd to waste time setting up and tearing down an rmap_list.
         *
         * But when KSM_RUN_UNMERGE, it's important to insert ahead of its
         * scanning cursor, otherwise KSM pages in newly forked mms will be
         * missed: then we might as well insert at the end of the list.
         */
        if (ksm_run & KSM_RUN_UNMERGE)
            list_add_tail(&mm_slot->mm_list, &ksm_mm_head.mm_list);
        else
            /* Add the slot to the list just behind ksm_scan.mm_slot. */
            list_add_tail(&mm_slot->mm_list, &ksm_scan.mm_slot->mm_list);
        spin_unlock(&ksm_mmlist_lock);

        /* Mark this process as registered with KSM. */
        set_bit(MMF_VM_MERGEABLE, &mm->flags);
        atomic_inc(&mm->mm_count);

        /* If the list was empty before, wake the ksmd kernel thread. */
        if (needs_wakeup)
            wake_up_interruptible(&ksm_thread_wait);

        return 0;
    }

1.4 The ksmd Kernel Thread

static DECLARE_WAIT_QUEUE_HEAD(ksm_thread_wait);

This defines the wait queue ksm_thread_wait. The ksmd kernel thread blocks on it until madvise wakes it.

ksm_init creates the ksmd kernel thread:

    static int __init ksm_init(void)
    {
        struct task_struct *ksm_thread;
        int err;

        /* Create the three slab caches: ksm_rmap_item, ksm_stable_node, ksm_mm_slot. */
        err = ksm_slab_init();
        if (err)
            goto out;

        /* Create the ksmd kernel thread; its thread function is ksm_scan_thread. */
        ksm_thread = kthread_run(ksm_scan_thread, NULL, "ksmd");
        if (IS_ERR(ksm_thread)) {
            pr_err("ksm: creating kthread failed\n");
            err = PTR_ERR(ksm_thread);
            goto out_free;
        }

    #ifdef CONFIG_SYSFS
        /* Create the KSM nodes under /sys/kernel/mm. */
        err = sysfs_create_group(mm_kobj, &ksm_attr_group);
        if (err) {
            pr_err("ksm: register sysfs failed\n");
            kthread_stop(ksm_thread);
            goto out_free;
        }
    #else
        ksm_run = KSM_RUN_MERGE;    /* no way for user to start it */

    #endif /* CONFIG_SYSFS */

    #ifdef CONFIG_MEMORY_HOTREMOVE
        /* There is no significance to this priority 100 */
        hotplug_memory_notifier(ksm_memory_callback, 100);
    #endif
        return 0;

    out_free:
        ksm_slab_free();
    out:
        return err;
    }

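The sysfs hierarchy is kernel_kobj --> mm_kobj --> ksm_attr_group, where the .attrs of ksm_attr_group is ksm_attrs.

The following nodes are created under /sys/kernel/mm/ksm; they let user space control and monitor KSM.

Node name, value, and meaning:

| Node | Value | Meaning |
| --- | --- | --- |
| full_scans | 0 | Read-only; number of completed full scans of all mergeable areas |
| pages_shared | 0 | ksm_pages_shared; number of nodes in the stable tree |
| pages_sharing | 0 | ksm_pages_sharing; number of additional mappings that share those KSM pages |
| pages_to_scan | 100 | ksm_thread_pages_to_scan; number of pages handled by one ksm_do_scan call |
| pages_unshared | 0 | ksm_pages_unshared; number of nodes in the unstable tree |
| pages_volatile | 0 | Number of pages that change too frequently |
| run | 0 | 0: stop ksmd but keep already merged pages; 1: run ksmd; 2: stop ksmd and unmerge all merged pages |
| sleep_millisecs | 20 | ksm_thread_sleep_millisecs; interval between KSM scans |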
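The nodes listed above are ordinary text files holding one integer each, so they can be read from C with standard I/O. The following is a small sketch; the helper name `ksm_read_knob` is hypothetical, and the only assumption about the system is the /sys/kernel/mm/ksm path given in the text. On a kernel without CONFIG_KSM or without sysfs the files do not exist and the helper simply reports failure.

```c
#include <assert.h>
#include <stdio.h>

/*
 * Read one integer attribute from /sys/kernel/mm/ksm/<name>.
 * Returns 0 and stores the value in *out on success, -1 if the file is
 * missing or its content cannot be parsed as an integer.
 */
static int ksm_read_knob(const char *name, long *out)
{
    char path[128];
    FILE *f;
    int ok;

    assert(name != NULL && out != NULL);
    if (snprintf(path, sizeof path, "/sys/kernel/mm/ksm/%s", name)
            >= (int)sizeof path)
        return -1;                  /* name too long for the buffer */

    f = fopen(path, "r");
    if (!f)
        return -1;                  /* no such node on this system */
    ok = (fscanf(f, "%ld", out) == 1);
    fclose(f);
    return ok ? 0 : -1;
}
```

Reading is normally possible for any user, whereas changing run, pages_to_scan or sleep_millisecs means writing to the same files and usually requires root.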

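What do the ratios among pages_shared, pages_sharing, and pages_unshared tell us?

A high pages_sharing/pages_shared ratio means pages are being shared well; a high pages_unshared/pages_sharing ratio means KSM is achieving little for the effort spent.

ksm_scan_thread() is the main loop of the ksmd kernel thread. Each iteration calls ksm_do_scan() to scan and merge pages_to_scan pages, then sleeps for sleep_millisecs milliseconds.

If there is nothing to do, it waits on ksm_thread_wait until madvise wakes it.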
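The scan-then-sleep loop puts a simple upper bound on how fast ksmd can work. The sketch below does that arithmetic; the function names are hypothetical, and the time spent inside ksm_do_scan() is ignored, so real throughput is lower than the bound.

```c
#include <assert.h>

/*
 * Upper bound on pages scanned per second when ksmd scans `pages_to_scan`
 * pages and then sleeps `sleep_millisecs` ms. Scan time itself is ignored.
 */
static unsigned long ksm_max_pages_per_sec(unsigned long pages_to_scan,
                                           unsigned long sleep_millisecs)
{
    assert(sleep_millisecs > 0);
    return pages_to_scan * 1000UL / sleep_millisecs;
}

/* Lower bound, in seconds, on one full pass over `total_pages` pages. */
static unsigned long ksm_min_full_scan_secs(unsigned long total_pages,
                                            unsigned long pages_to_scan,
                                            unsigned long sleep_millisecs)
{
    unsigned long rate = ksm_max_pages_per_sec(pages_to_scan, sleep_millisecs);

    assert(rate > 0);
    return (total_pages + rate - 1) / rate;     /* round up */
}
```

With pages_to_scan = 100 and sleep_millisecs = 20, the bound is 5000 pages per second, so one pass over 1 GiB of mergeable memory (262144 pages of 4 KiB) takes at least 53 seconds. Because a page must keep the same checksum across two rounds before it can enter the unstable tree, a first merge needs at least two such passes.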

    static int ksm_scan_thread(void *nothing)
    {
        set_freezable();
        set_user_nice(current, 5);

        while (!kthread_should_stop()) {
            mutex_lock(&ksm_thread_mutex);
            wait_while_offlining();
            if (ksmd_should_run())
                ksm_do_scan(ksm_thread_pages_to_scan);
            mutex_unlock(&ksm_thread_mutex);

            try_to_freeze();

            if (ksmd_should_run()) {
                schedule_timeout_interruptible(
                    msecs_to_jiffies(ksm_thread_sleep_millisecs));
            } else {
                wait_event_freezable(ksm_thread_wait,
                    ksmd_should_run() || kthread_should_stop());
            }
        }
        return 0;
    }

ksm_do_scan does the actual work of the ksmd thread. Its flow, shown below, turns a page into a KSM page: it searches the stable and unstable trees and then performs the merge.

    ksm_do_scan
      scan_get_next_rmap_item          pick a suitable anonymous page
      cmp_and_merge_page               compare the page with pages in root_stable_tree / root_unstable_tree and decide whether it can be merged
        stable_tree_search             search the stable tree for a node whose content matches the page
        try_to_merge_with_ksm_page     try to merge the candidate page into the KSM page
        stable_tree_append
        unstable_tree_search_insert    search the unstable tree for a node whose content matches the page
        try_to_merge_two_pages         if a match is found in the unstable tree, try to merge the two pages into one KSM page
        stable_tree_append             add the rmap_items of the two merged pages to the stable node's hlist
        break_cow

The ksm_do_scan() function:

    static void ksm_do_scan(unsigned int scan_npages)
    {
        struct rmap_item *rmap_item;
        struct page *uninitialized_var(page);

        /* Try to merge scan_npages pages in this loop. */
        while (scan_npages-- && likely(!freezing(current))) {
            cond_resched();
            /* Get the next suitable anonymous page. */
            rmap_item = scan_get_next_rmap_item(&page);
            if (!rmap_item)
                return;
            /*
             * Look in KSM's stable and unstable trees for a page that this
             * page can be merged with, and try to merge them.
             */
            cmp_and_merge_page(page, rmap_item);
            put_page(page);
        }
    }

scan_get_next_rmap_item() walks the ksm_mm_head list, then walks every VMA of each process address space, and returns an rmap_item obtained through get_next_rmap_item().

    static struct rmap_item *scan_get_next_rmap_item(struct page **page)
    {
        struct mm_struct *mm;
        struct mm_slot *slot;
        struct vm_area_struct *vma;
        struct rmap_item *rmap_item;
        int nid;

        /* An empty list means there is no mm_slot, so there is nothing to do. */
        if (list_empty(&ksm_mm_head.mm_list))
            return NULL;

        slot = ksm_scan.mm_slot;
        /*
         * The cursor is at the list head: this is the first run of ksmd or
         * the start of a new full scan, so do some initialisation.
         */
        if (slot == &ksm_mm_head) {
            ...
            if (!ksm_merge_across_nodes) {
                struct stable_node *stable_node;
                struct list_head *this, *next;
                struct page *page;

                list_for_each_safe(this, next, &migrate_nodes) {
                    stable_node = list_entry(this,
                                struct stable_node, list);
                    page = get_ksm_page(stable_node, false);
                    if (page)
                        put_page(page);
                    cond_resched();
                }
            }

            /* Reset the unstable trees. */
            for (nid = 0; nid < ksm_nr_node_ids; nid++)
                root_unstable_tree[nid] = RB_ROOT;

            spin_lock(&ksm_mmlist_lock);
            slot = list_entry(slot->mm_list.next, struct mm_slot, mm_list);
            ksm_scan.mm_slot = slot;
            spin_unlock(&ksm_mmlist_lock);
            /*
             * Although we tested list_empty() above, a racing __ksm_exit
             * of the last mm on the list may have removed it since then.
             */
            if (slot == &ksm_mm_head)
                return NULL;
    next_mm:
            ksm_scan.address = 0;
            ksm_scan.rmap_list = &slot->rmap_list;
        }

        mm = slot->mm;
        down_read(&mm->mmap_sem);
        if (ksm_test_exit(mm))
            vma = NULL;
        else
            vma = find_vma(mm, ksm_scan.address);

        /* Walk all VMAs. */
        for (; vma; vma = vma->vm_next) {
            if (!(vma->vm_flags & VM_MERGEABLE))
                continue;
            if (ksm_scan.address < vma->vm_start)
                ksm_scan.address = vma->vm_start;
            if (!vma->anon_vma)
                ksm_scan.address = vma->vm_end;

            /* Scan every virtual page in the VMA. */
            while (ksm_scan.address < vma->vm_end) {
                if (ksm_test_exit(mm))
                    break;
                /*
                 * follow_page() looks up the struct page of the normal
                 * mapping at this virtual address.
                 */
                *page = follow_page(vma, ksm_scan.address, FOLL_GET);
                if (IS_ERR_OR_NULL(*page)) {
                    ksm_scan.address += PAGE_SIZE;
                    cond_resched();
                    continue;
                }
                /* Only anonymous pages are handled. */
                if (PageAnon(*page) ||
                    page_trans_compound_anon(*page)) {
                    flush_anon_page(vma, *page, ksm_scan.address);
                    flush_dcache_page(*page);
                    /*
                     * Look on mm_slot->rmap_list for the rmap_item of this
                     * virtual address; create one if none is found.
                     */
                    rmap_item = get_next_rmap_item(slot,
                        ksm_scan.rmap_list, ksm_scan.address);
                    if (rmap_item) {
                        ksm_scan.rmap_list =
                                &rmap_item->rmap_list;
                        ksm_scan.address += PAGE_SIZE;
                    } else
                        put_page(*page);
                    up_read(&mm->mmap_sem);
                    return rmap_item;
                }
                put_page(*page);
                ksm_scan.address += PAGE_SIZE;
                cond_resched();
            }
        }

        /*
         * We get here when the for loop found no further suitable anonymous
         * page in this process; if the mm is exiting, reset the cursor.
         */
        if (ksm_test_exit(mm)) {
            ksm_scan.address = 0;
            ksm_scan.rmap_list = &slot->rmap_list;
        }
        /*
         * Nuke all the rmap_items that are above this current rmap:
         * because there were no VM_MERGEABLE vmas with such addresses.
         */
        /* These rmap_items no longer need to occupy memory, so delete them. */
        remove_trailing_rmap_items(slot, ksm_scan.rmap_list);

        spin_lock(&ksm_mmlist_lock);
        /* Advance to the next mm_slot. */
        ksm_scan.mm_slot = list_entry(slot->mm_list.next,
                        struct mm_slot, mm_list);
        /*
         * Handle the case where the process is going away: remove the
         * mm_slot from the ksm_mm_head list, free it, and clear
         * MMF_VM_MERGEABLE.
         */
        if (ksm_scan.address == 0) {
            /*
             * We've completed a full scan of all vmas, holding mmap_sem
             * throughout, and found no VM_MERGEABLE: so do the same as
             * __ksm_exit does to remove this mm from all our lists now.
             * This applies either when cleaning up after __ksm_exit
             * (but beware: we can reach here even before __ksm_exit),
             * or when all VM_MERGEABLE areas have been unmapped (and
             * mmap_sem then protects against race with MADV_MERGEABLE).
             */
            hash_del(&slot->link);
            list_del(&slot->mm_list);
            spin_unlock(&ksm_mmlist_lock);

            free_mm_slot(slot);
            clear_bit(MMF_VM_MERGEABLE, &mm->flags);
            up_read(&mm->mmap_sem);
            mmdrop(mm);
        } else {
            spin_unlock(&ksm_mmlist_lock);
            up_read(&mm->mmap_sem);
        }

        /* Repeat until we've completed scanning the whole list */
        slot = ksm_scan.mm_slot;
        if (slot != &ksm_mm_head)
            goto next_mm;       /* continue with the next mm_slot */

        /* One full round over all mm_slots is done: bump the counter. */
        ksm_scan.seqnr++;
        return NULL;
    }

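cmp_and_merge_page() takes two parameters: page is the eligible anonymous page just found while scanning the mm_slot, and rmap_item is the rmap_item structure that corresponds to that page.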

    static void cmp_and_merge_page(struct page *page, struct rmap_item *rmap_item)
    {
        struct rmap_item *tree_rmap_item;
        struct page *tree_page = NULL;
        struct stable_node *stable_node;
        struct page *kpage;
        unsigned int checksum;
        int err;

        stable_node = page_stable_node(page);
        if (stable_node) {
            if (stable_node->head != &migrate_nodes &&
                get_kpfn_nid(stable_node->kpfn) != NUMA(stable_node->nid)) {
                rb_erase(&stable_node->node,
                     root_stable_tree + NUMA(stable_node->nid));
                stable_node->head = &migrate_nodes;
                list_add(&stable_node->list, stable_node->head);
            }
            if (stable_node->head != &migrate_nodes &&
                rmap_item->head == stable_node)
                return;
        }

        /* We first start with searching the page inside the stable tree */
        /* Look in root_stable_tree for a stable page with the same content as page. */
        kpage = stable_tree_search(page);
        /*
         * kpage and page are the same page, so this page is already a KSM
         * page and needs no further processing.
         */
        if (kpage == page && rmap_item->head == stable_node) {
            put_page(kpage);
            return;
        }

        remove_rmap_item_from_tree(rmap_item);

        if (kpage) {
            /*
             * A node with identical content was found in the stable tree:
             * try to merge this page into it.
             */
            err = try_to_merge_with_ksm_page(rmap_item, page, kpage);
            if (!err) {
                /*
                 * The page was successfully merged:
                 * add its rmap_item to the stable tree.
                 */
                lock_page(kpage);
                /* After a successful merge, add rmap_item to stable_node->hlist. */
                stable_tree_append(rmap_item, page_stable_node(kpage));
                unlock_page(kpage);
            }
            put_page(kpage);
            return;
        }

        /* ---- stable tree handling above, unstable tree handling below ---- */

        /*
         * If the hash value of the page has changed from the last time
         * we calculated it, this page is changing frequently: therefore we
         * don't want to insert it in the unstable tree, and we don't want
         * to waste our time searching for something identical to it there.
         */
        /*
         * Compute the checksum again; if it differs, the page changes too
         * often to be worth adding to the unstable tree.
         */
        checksum = calc_checksum(page);
        if (rmap_item->oldchecksum != checksum) {
            rmap_item->oldchecksum = checksum;
            return;
        }

        /* Search root_unstable_tree for a node with the same content as this page. */
        tree_rmap_item =
            unstable_tree_search_insert(rmap_item, page, &tree_page);
        if (tree_rmap_item) {
            /* Try to merge page and tree_page into one KSM page, kpage. */
            kpage = try_to_merge_two_pages(rmap_item, page,
                            tree_rmap_item, tree_page);
            put_page(tree_page);
            if (kpage) {
                /*
                 * The pages were successfully merged: insert new
                 * node in the stable tree and add both rmap_items.
                 */
                lock_page(kpage);
                /* Insert kpage into root_stable_tree, creating a new stable_node. */
                stable_node = stable_tree_insert(kpage);
                if (stable_node) {
                    stable_tree_append(tree_rmap_item, stable_node);
                    stable_tree_append(rmap_item, stable_node);
                }
                unlock_page(kpage);

                /*
                 * If we fail to insert the page into the stable tree,
                 * we will have 2 virtual addresses that are pointing
                 * to a ksm page left outside the stable tree,
                 * in which case we need to break_cow on both.
                 */
                /*
                 * If inserting into the stable tree failed, call break_cow()
                 * to deliberately trigger a page fault and split the KSM page.
                 */
                if (!stable_node) {
                    break_cow(tree_rmap_item);
                    break_cow(rmap_item);
                }
            }
        }
    }

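2. Differences Between Anonymous Pages and KSM Pages

2.1 How Are Anonymous Pages and KSM Pages Told Apart?

If a struct page is an anonymous page mapped into user virtual address space, its mapping member points to an anon_vma.

The low two bits of mapping indicate whether the page is an anonymous page or a KSM page.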

    /*
     * ...
     *
     * PAGE_MAPPING_KSM without PAGE_MAPPING_ANON is currently never used.
     * (So a KSM page is always an anonymous page: KSM pages are a subset
     * of anonymous pages.)
     * ...
     */
    #define PAGE_MAPPING_ANON   1
    #define PAGE_MAPPING_KSM    2
    #define PAGE_MAPPING_FLAGS  (PAGE_MAPPING_ANON | PAGE_MAPPING_KSM)

The kernel provides two functions to test whether a page is a KSM page or an anonymous page:

    static inline int PageAnon(struct page *page)
    {
        return ((unsigned long)page->mapping & PAGE_MAPPING_ANON) != 0;
    }

    /* This also shows that a KSM page must have both the ANON and KSM bits set. */
    static inline int PageKsm(struct page *page)
    {
        return ((unsigned long)page->mapping & PAGE_MAPPING_FLAGS) ==
                    (PAGE_MAPPING_ANON | PAGE_MAPPING_KSM);
    }
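The tagging trick behind PageAnon() and PageKsm() can be tried out in user space. The sketch below is a toy: `struct toy_page` and the `toy_*` helpers are hypothetical stand-ins, and only the three PAGE_MAPPING_* values are taken from the listing above. It relies on the pointed-to objects being at least 4-byte aligned, so that the low two bits of the pointer are free.

```c
#include <assert.h>
#include <stdint.h>

#define PAGE_MAPPING_ANON  1u
#define PAGE_MAPPING_KSM   2u
#define PAGE_MAPPING_FLAGS (PAGE_MAPPING_ANON | PAGE_MAPPING_KSM)

/* Toy stand-in for struct page: a pointer value plus two tag bits. */
struct toy_page {
    uintptr_t mapping;
};

/* Store `ptr` in the page together with the given tag bits. */
static void toy_set_mapping(struct toy_page *page, void *ptr, unsigned flags)
{
    assert(((uintptr_t)ptr & PAGE_MAPPING_FLAGS) == 0);  /* alignment check */
    assert((flags & ~PAGE_MAPPING_FLAGS) == 0);
    page->mapping = (uintptr_t)ptr | flags;
}

/* Strip the tag bits to get the original pointer back. */
static void *toy_mapping_ptr(const struct toy_page *page)
{
    return (void *)(page->mapping & ~(uintptr_t)PAGE_MAPPING_FLAGS);
}

/* Same test as PageAnon(): the ANON bit is set. */
static int toy_page_anon(const struct toy_page *page)
{
    return (page->mapping & PAGE_MAPPING_ANON) != 0;
}

/* Same test as PageKsm(): both the ANON and KSM bits are set. */
static int toy_page_ksm(const struct toy_page *page)
{
    return (page->mapping & PAGE_MAPPING_FLAGS) == PAGE_MAPPING_FLAGS;
}
```

A page tagged with only PAGE_MAPPING_ANON passes the anonymous test but not the KSM test, while a page tagged with both bits passes both, which is the subset relation noted in the kernel comment.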

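2.2 Differences Between KSM Pages and Anonymous Pages

There are two cases: VMAs of a parent and child process share the same anonymous page, or VMAs of unrelated processes share the same anonymous page.

When the parent process maps an anonymous page in a VMA, it sets up the RMAP (reverse mapping) infrastructure for that VMA; __page_set_anon_rmap() sets page->index to the offset of the virtual address within the VMA.

On fork, the child copies the parent's VMAs and page tables, so page->index is the same from the point of view of both parent and child.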

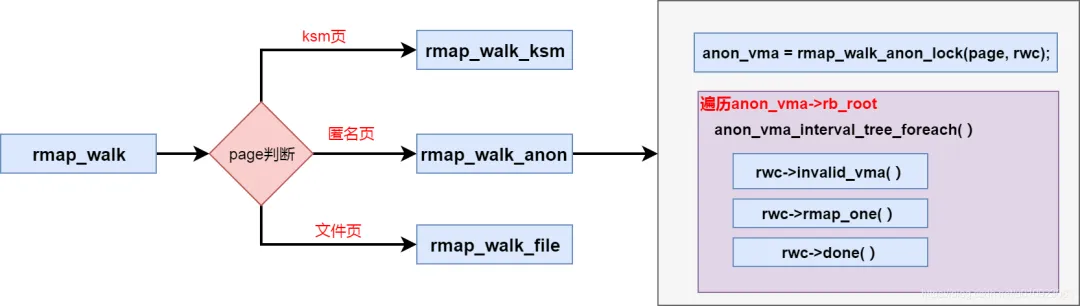

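When all virtual addresses that map a page have to be found starting from the page, rmap_walk_anon() uses page->index to compute the virtual address in the VMA for both parent and child.

In rmap_walk(), an anonymous page that is not a KSM page is handled by rmap_walk_anon() for the reverse lookup.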

    static int rmap_walk_anon(struct page *page, struct rmap_walk_control *rwc)
    {
        ...
        pgoff = page_to_pgoff(page);
        anon_vma_interval_tree_foreach(avc, &anon_vma->rb_root, pgoff, pgoff) {
            struct vm_area_struct *vma = avc->vma;
            /* Compute the virtual address from page->index. */
            unsigned long address = vma_address(page, vma);

            if (rwc->invalid_vma && rwc->invalid_vma(vma, rwc->arg))
                continue;

            ret = rwc->rmap_one(page, vma, address, rwc->arg);
            if (ret != SWAP_AGAIN)
                break;
            if (rwc->done && rwc->done(page))
                break;
        }
        anon_vma_unlock_read(anon_vma);
        return ret;
    }
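The address computation that rmap_walk_anon() relies on is a single line of arithmetic: the page's index minus the VMA's starting page offset, scaled to bytes and added to the VMA's start address. The sketch below reproduces that formula in user space; `struct toy_vma` and `toy_vma_address` are hypothetical names, and a 4 KiB page size is assumed.

```c
#include <assert.h>

#define TOY_PAGE_SHIFT 12   /* assumes 4 KiB pages */

/* The two VMA fields the computation needs. */
struct toy_vma {
    unsigned long vm_start;   /* first virtual address of the VMA */
    unsigned long vm_pgoff;   /* page offset that vm_start corresponds to */
};

/* Virtual address, inside `vma`, of the page whose index is `pgoff`. */
static unsigned long toy_vma_address(unsigned long pgoff,
                                     const struct toy_vma *vma)
{
    assert(pgoff >= vma->vm_pgoff);   /* page must lie at or after vm_start */
    return vma->vm_start + ((pgoff - vma->vm_pgoff) << TOY_PAGE_SHIFT);
}
```

Because fork copies the VMA, parent and child have the same vm_start and vm_pgoff, so one page->index yields the same virtual address in both. Pages merged by KSM from unrelated processes have no such guarantee, since each mapping may sit at a different address.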

A KSM page results from merging two pages with identical content. They may belong to VMAs of different processes or to VMAs of a parent and child.

    int rmap_walk_ksm(struct page *page, struct rmap_walk_control *rwc)
    {
        ...
    again:
        hlist_for_each_entry(rmap_item, &stable_node->hlist, hlist) {
            struct anon_vma *anon_vma = rmap_item->anon_vma;
            struct anon_vma_chain *vmac;
            struct vm_area_struct *vma;

            anon_vma_lock_read(anon_vma);
            anon_vma_interval_tree_foreach(vmac, &anon_vma->rb_root,
                               0, ULONG_MAX) {
                ...
                /* Use rmap_item->address to get the virtual address in each VMA. */
                ret = rwc->rmap_one(page, vma,
                        rmap_item->address, rwc->arg);
                ...
            }
            anon_vma_unlock_read(anon_vma);
        }
        ...
    }

For a KSM page, therefore, page->index equals the offset in the VMA that first mapped the page, which is why rmap_walk_ksm() takes each virtual address from rmap_item->address instead of computing it from page->index.

Image source: CarlyleLiu

Original author: ArnoldLu

Original article:

https://www.cnblogs.com/arnoldlu/p/8335541.html