Linux内存管理:缺页中断处理

- 2026-03-21 06:42:47

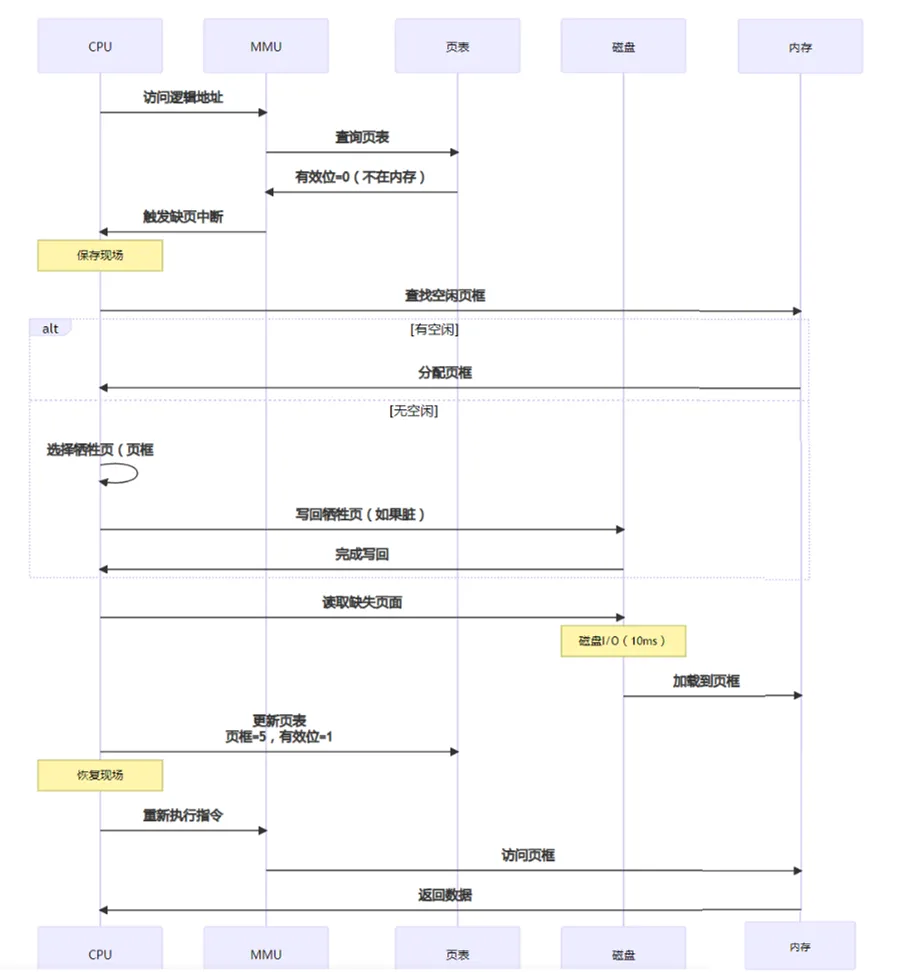

当进程访问这些没有建立映射关系的虚拟内存时,处理器自动触发一个缺页异常。

缺页异常是Linux内存管理中最复杂和重要的一部分,需要考虑很多相关细节,包括匿名页面、KSM页面、page cache页面、写时复制、私有映射和共享映射等。

一、ARMv7-A缺页异常

当有中断到来时,硬件会做一些处理;对于软件来说,要做的事情是从中断向量表开始。

__vectors_start是中断异常处理的起点,具体到缺页异常路径是:

vectors_start-->vector_dabt-->dabt_usr/__dabt_svc-->dabt_helper-->v7_early_abort-->do_DataAbort-->fsr_info-->do_translation_fault/do_page_fault/do_sect_fault。

重点是do_page_fault。

.section .vectors, "ax", %progbits__vectors_start:W(b) vector_rstW(b) vector_undW(ldr) pc, __vectors_start + 0x1000W(b) vector_pabtW(b) vector_dabt--------------------------数据异常向量W(b) vector_addrexcptnW(b) vector_irqW(b) vector_fiq

data abort只能出现在user mode和svc mode两种模式下,其他模式下无效。

两者都通过dabt_helper进行处理。

/** Data abort dispatcher* Enter in ABT mode, spsr = USR CPSR, lr = USR PC*/vector_stub dabt, ABT_MODE, 8.long __dabt_usr @ 0 (USR_26 / USR_32).long __dabt_invalid @ 1 (FIQ_26 / FIQ_32).long __dabt_invalid @ 2 (IRQ_26 / IRQ_32).long __dabt_svc @ 3 (SVC_26 / SVC_32)...__dabt_usr:usr_entrykuser_cmpxchg_checkmov r2, spdabt_helperb ret_from_exceptionUNWIND(.fnend )ENDPROC(__dabt_usr)__dabt_svc:svc_entrymov r2, spdabt_helperTHUMB( ldr r5, [sp, #S_PSR] ) @ potentially updated CPSRsvc_exit r5 @ return from exceptionUNWIND(.fnend )ENDPROC(__dabt_svc).macro dabt_helper@@ Call the processor-specific abort handler:@@ r2 - pt_regs@ r4 - aborted context pc@ r5 - aborted context psr@@ The abort handler must return the aborted address in r0, and@ the fault status register in r1. r9 must be preserved.@#ifdef MULTI_DABORTldr ip, .LCprocfnsmov lr, pcldr pc, [ip, #PROCESSOR_DABT_FUNC]#elsebl CPU_DABORT_HANDLER--------------------------------指向v7_early_abort#endif.endm

FSR和FAR是从CP15寄存器中读取的参数:

用于系统存储管理的协处理器CP15

MCR{cond} coproc,opcode1,Rd,CRn,CRm,opcode2

MRC {cond} coproc,opcode1,Rd,CRn,CRm,opcode2

coproc 指令操作的协处理器名.标准名为pn,n,为0~15

opcode1 协处理器的特定操作码. 对于CP15寄存器来说,opcode1永远为0,不为0时,操作结果不可预知

CRd 作为目标寄存器的协处理器寄存器.

CRn 存放第1个操作数的协处理器寄存器.

CRm 存放第2个操作数的协处理器寄存器. (用来区分同一个编号的不同物理寄存器,当不需要提供附加信息时,指定为C0)

opcode2 可选的协处理器特定操作码. (用来区分同一个编号的不同物理寄存器,当不需要提供附加信息时,指定为0)

| Register(寄存器) | Read | Write | |

|---|---|---|---|

| C0 | ID Code (1) | Unpredictable | |

| C0 | Catch type(1) | Unpredictable | |

| C1 | Control | Control | |

| C2 | Translation table base | Translation table base | |

| C3 | Domain access control | Domain access control | |

| C4 | Unpredictable | Unpredictable | |

| C5 | Fault status(2) | Fault status (2) | |

| C6 | Fault address | Fault address | |

| C7 | Unpredictable | Cache operations | |

| C8 | Unpredictable | TLB operations | |

| C9 | Cache lockdown(2) | Cache lockdown (2) | |

| C10 | TLB lock down(2) | TLB lock down(2) | |

| C11 | Unpredictable | Unpredictable | |

| C12 | Unpredictable | Unpredictable | |

| C13 | Process ID | Process ID | |

| C14 | Unpredictable | Unpredictable | |

| C15 | Test configuration | Test configuration |

从上面的解释可知,取CP15的c6和c5放入r0和r1,分别表示FAR和FSR。

ENTRY(v7_early_abort)mrc p15, 0, r1, c5, c0, 0 @ get FSRmrc p15, 0, r0, c6, c0, 0 @ get FAR...b do_DataAbortENDPROC(v7_early_abort)

从v7_early_abort可知,addr是从CP15的c6获取,fsr是从CP15的c5获取;regs在dabt_usr/dabt_svc中从sp获取。

/** Dispatch a data abort to the relevant handler.*/asmlinkage void __exceptiondo_DataAbort(unsignedlong addr, unsignedint fsr, struct pt_regs *regs){const struct fsr_info *inf = fsr_info + fsr_fs(fsr);----------------根据fsr从fsr_info中找到对应的处理函数。struct siginfo info;if (!inf->fn(addr, fsr & ~FSR_LNX_PF, regs))------------------------根据fsr进行处理return;pr_alert("Unhandled fault: %s (0x%03x) at 0x%08lx\n",---------------下面都是无法处理的异常inf->name, fsr, addr);show_pte(current->mm, addr);info.si_signo = inf->sig;info.si_errno = 0;info.si_code = inf->code;info.si_addr = (void __user *)addr;arm_notify_die("", regs, &info, fsr, 0);}

fsr_fs将fsr转换到fsr_info下表,从而获取对应的错误类型处理函数。

staticinlineintfsr_fs(unsignedint fsr){return (fsr & FSR_FS3_0) | (fsr & FSR_FS4) >> 6;------------取fsr低4位;再与fsr第11位,然后右移6位,最后再与低4位或。}

fsr_info数组中对不同FSR类型规定了不同处理手段:

static struct fsr_info fsr_info[] = {/** The following are the standard ARMv3 and ARMv4 aborts. ARMv5* defines these to be "precise" aborts.*/...{ do_translation_fault, SIGSEGV, SEGV_MAPERR, "section translation fault" },-----段转换错误,即找不到二级页表{ do_bad, SIGBUS, 0, "external abort on linefetch" },{ do_page_fault, SIGSEGV, SEGV_MAPERR, "page translation fault" },---------------页表错误,没有对应的物理地址{ do_bad, SIGBUS, 0, "external abort on non-linefetch" },{ do_bad, SIGSEGV, SEGV_ACCERR, "section domain fault" },{ do_bad, SIGBUS, 0, "external abort on non-linefetch" },{ do_bad, SIGSEGV, SEGV_ACCERR, "page domain fault" },{ do_bad, SIGBUS, 0, "external abort on translation" },{ do_sect_fault, SIGSEGV, SEGV_ACCERR, "section permission fault" },-------------段权限错误,二级页表权限错误{ do_bad, SIGBUS, 0, "external abort on translation" },{ do_page_fault, SIGSEGV, SEGV_ACCERR, "page permission fault" },------------页权限错误...}

二、do_page_fault

do_page_fault是缺页中断的核心函数,主要工作交给do_page_fault处理,然后进行一些异常处理do_kernel_fault和__do_user_fault。

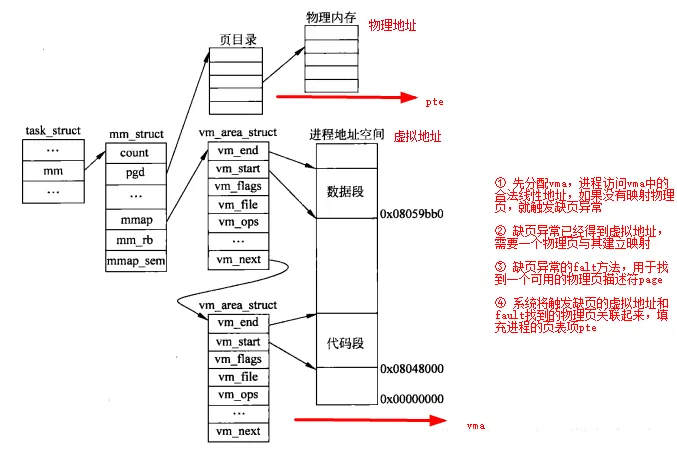

__do_page_fault查找合适的vma,然后主要工作交给handle_mm_fault;handle_mm_fault的核心又是handle_pte_fault。

handle_pte_fault中根据也是否存在分为两类:do_fault(文件映射缺页中断)、do_anonymous_page(匿名页面缺页中断)、do_swap_page()和do_wp_page(写时复制)。

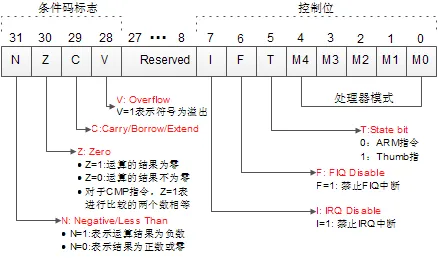

staticint __kprobesdo_page_fault(unsignedlong addr, unsignedint fsr, struct pt_regs *regs){struct task_struct *tsk;struct mm_struct *mm;int fault, sig, code;unsigned int flags = FAULT_FLAG_ALLOW_RETRY | FAULT_FLAG_KILLABLE;if (notify_page_fault(regs, fsr))return 0;tsk = current;-------------------------------------------获取当前进程的task_structmm = tsk->mm;-------------------------------------------获取进程内存管理结构体mm_struct/* Enable interrupts if they were enabled in the parent context. */if (interrupts_enabled(regs))local_irq_enable();/** If we're in an interrupt or have no user* context, we must not take the fault..*/if (in_atomic() || !mm)----------------------------------in_atomic判断当前状态是否处于中断上下文或者禁止抢占,如果是跳转到no_context;如果当前进程没有mm,说明是一个内核线程,跳转到no_context。goto no_context;if (user_mode(regs))flags |= FAULT_FLAG_USER;if (fsr & FSR_WRITE)flags |= FAULT_FLAG_WRITE;/** As per x86, we may deadlock here. However, since the kernel only* validly references user space from well defined areas of the code,* we can bug out early if this is from code which shouldn't.*/if (!down_read_trylock(&mm->mmap_sem)) {if (!user_mode(regs) && !search_exception_tables(regs->ARM_pc))----------发生在内核空间,且没有在exception tables查询到该地址,跳转到no_context。goto no_context;retry:down_read(&mm->mmap_sem);---------------------------用户空间则睡眠等待锁持有者释放锁。} else {/** The above down_read_trylock() might have succeeded in* which case, we'll have missed the might_sleep() from* down_read()*/might_sleep();#ifdef CONFIG_DEBUG_VMif (!user_mode(regs) &&!search_exception_tables(regs->ARM_pc))goto no_context;#endif}fault = __do_page_fault(mm, addr, fsr, flags, tsk);/* If we need to retry but a fatal signal is pending, handle the* signal first. We do not need to release the mmap_sem because* it would already be released in __lock_page_or_retry in* mm/filemap.c. */if ((fault & VM_FAULT_RETRY) && fatal_signal_pending(current))return 0;/** Major/minor page fault accounting is only done on the* initial attempt. If we go through a retry, it is extremely* likely that the page will be found in page cache at that point.*/perf_sw_event(PERF_COUNT_SW_PAGE_FAULTS, 1, regs, addr);if (!(fault & VM_FAULT_ERROR) && flags & FAULT_FLAG_ALLOW_RETRY) {if (fault & VM_FAULT_MAJOR) {tsk->maj_flt++;perf_sw_event(PERF_COUNT_SW_PAGE_FAULTS_MAJ, 1,regs, addr);} else {tsk->min_flt++;perf_sw_event(PERF_COUNT_SW_PAGE_FAULTS_MIN, 1,regs, addr);}if (fault & VM_FAULT_RETRY) {/* Clear FAULT_FLAG_ALLOW_RETRY to avoid any risk* of starvation. */flags &= ~FAULT_FLAG_ALLOW_RETRY;flags |= FAULT_FLAG_TRIED;goto retry;}}up_read(&mm->mmap_sem);/** Handle the "normal" case first - VM_FAULT_MAJOR / VM_FAULT_MINOR*/if (likely(!(fault & (VM_FAULT_ERROR | VM_FAULT_BADMAP | VM_FAULT_BADACCESS))))----没有错误,说明缺页中断处理完成。return 0;/** If we are in kernel mode at this point, we* have no context to handle this fault with.*/if (!user_mode(regs))-----------------------------------判断CPSR寄存器的低4位,CPSR的低5位表示当前所处的模式。如果低4位位0,则处于用户态。见下面CPSRM4~M0细节。goto no_context;------------------------------------进行内核空间错误处理if (fault & VM_FAULT_OOM) {/** We ran out of memory, call the OOM killer, and return to* userspace (which will retry the fault, or kill us if we* got oom-killed)*/pagefault_out_of_memory();--------------------------进行OOM处理,然后返回。return 0;}if (fault & VM_FAULT_SIGBUS) {/** We had some memory, but were unable to* successfully fix up this page fault.*/sig = SIGBUS;code = BUS_ADRERR;} else {/** Something tried to access memory that* isn't in our memory map..*/sig = SIGSEGV;code = fault == VM_FAULT_BADACCESS ?SEGV_ACCERR : SEGV_MAPERR;}__do_user_fault(tsk, addr, fsr, sig, code, regs);------用户模式下错误处理,通过给用户进程发信号:SIGBUS/SIGSEGV。return 0;no_context:__do_kernel_fault(mm, addr, fsr, regs);----------------错误发生在内核模式,如果内核无法处理,此处产生oops错误。return 0;}

CPSR是当前程序状态寄存器的意思,格式如下:

其中M[4:0]的解释如下,可以看出user_mode判断的低4位,都为0即为用户模式。

| M[4:0]内容 | 处理器模式 | ARM模式可访问的寄存器 | THUMB模式可访问的寄存器 |

|---|---|---|---|

| 0b10000 | 用户模式 | PC,CPSR,R0~R14 | PC,CPSR,R0~R7,LR,SP |

| 0b10001 | FIQ模式 | PC,CPSR,SPSR_fiq,R14_fiq~R8_fiq,R0~R7 | PC,CPSR,SPSR_fiq,LR_fiq,SP_fiq,R0~R7 |

| 0b10010 | IRQ模式 | PC,CPSR,SPSR_irq,R14_irq~R13_irq,R0~R12 | PC,CPSR,SPSR_irq,LR_irq,SP_irq,R0~R7 |

| 0b10011 | 管理模式 | PC,CPSR,SPSR_svc,R14_svc~R13_svc,R0~R12 | PC,CPSR,SPSR_svc,LR_svc,SP_svc,R0~R7 |

| 0b10111 | 中止模式 | PC,CPSR,SPSR_abt,R14_abt~R13_abt,R0~R12 | PC,CPSR,SPSR_abt,LR_abt,SP_abt,R0~R7 |

| 0b11011 | 未定义模式 | PC,CPSR,SPSR_und,R14_und~R13_und,R0~R12 | PC,CPSR,SPSR_und,LR_und,SP_und,R0~R7 |

| 0b11111 | 系统模式 | PC,CPSR,R0~R14 | PC,CPSR,LR,SP,R0~R7 |

__do_page_fault返回VM_FAULT_XXX类型的错误。

/** Different kinds of faults, as returned by handle_mm_fault().* Used to decide whether a process gets delivered SIGBUS or* just gets major/minor fault counters bumped up.*/#define VM_FAULT_MINOR 0 /* For backwards compat. Remove me quickly. */#define VM_FAULT_OOM 0x0001#define VM_FAULT_SIGBUS 0x0002#define VM_FAULT_MAJOR 0x0004#define VM_FAULT_WRITE 0x0008 /* Special case for get_user_pages */#define VM_FAULT_HWPOISON 0x0010 /* Hit poisoned small page */#define VM_FAULT_HWPOISON_LARGE 0x0020 /* Hit poisoned large page. Index encoded in upper bits */#define VM_FAULT_SIGSEGV 0x0040#define VM_FAULT_NOPAGE 0x0100 /* ->fault installed the pte, not return page */#define VM_FAULT_LOCKED 0x0200 /* ->fault locked the returned page */#define VM_FAULT_RETRY 0x0400 /* ->fault blocked, must retry */#define VM_FAULT_FALLBACK 0x0800 /* huge page fault failed, fall back to small */#define VM_FAULT_HWPOISON_LARGE_MASK 0xf000 /* encodes hpage index for large hwpoison */#define VM_FAULT_ERROR (VM_FAULT_OOM | VM_FAULT_SIGBUS | VM_FAULT_SIGSEGV | \VM_FAULT_HWPOISON | VM_FAULT_HWPOISON_LARGE | \VM_FAULT_FALLBACK)#define VM_FAULT_BADMAP 0x010000#define VM_FAULT_BADACCESS 0x020000

do_page_fault调用do_page_fault,do_page_fault首先根据addr查找VMA,然后交给handle_mm_fault进行处理。

handle_mm_fault调用handle_mm_fault,handle_mm_fault进行从PGD-->PUD-->PMD-->PTE的处理。对于二级映射来说,最主要的PTE的处理交给handle_pte_fault。

static int __kprobes__do_page_fault(struct mm_struct *mm, unsigned long addr, unsigned int fsr,unsigned int flags, struct task_struct *tsk){struct vm_area_struct *vma;int fault;vma = find_vma(mm, addr);--------------通过addr在查找vma,如果找不到则返回VM_FAULT_BADMAP错误。fault = VM_FAULT_BADMAP;if (unlikely(!vma))goto out;--------------------------返回VM_FAULT_BADMAP错误类型if (unlikely(vma->vm_start > addr))goto check_stack;/** Ok, we have a good vm_area for this* memory access, so we can handle it.*/good_area:if (access_error(fsr, vma)) {---------判断当前vma是否可写或者可执行,如果否则返回VM_FAULT_BADACCESS错误。fault = VM_FAULT_BADACCESS;goto out;}return handle_mm_fault(mm, vma, addr & PAGE_MASK, flags);check_stack:/* Don't allow expansion below FIRST_USER_ADDRESS */if (vma->vm_flags & VM_GROWSDOWN &&addr >= FIRST_USER_ADDRESS && !expand_stack(vma, addr))goto good_area;out:return fault;}/** By the time we get here, we already hold the mm semaphore** The mmap_sem may have been released depending on flags and our* return value. See filemap_fault() and __lock_page_or_retry().*/inthandle_mm_fault(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, unsigned int flags){int ret;__set_current_state(TASK_RUNNING);count_vm_event(PGFAULT);mem_cgroup_count_vm_event(mm, PGFAULT);/* do counter updates before entering really critical section. */check_sync_rss_stat(current);/** Enable the memcg OOM handling for faults triggered in user* space. Kernel faults are handled more gracefully.*/if (flags & FAULT_FLAG_USER)mem_cgroup_oom_enable();ret = __handle_mm_fault(mm, vma, address, flags);if (flags & FAULT_FLAG_USER) {mem_cgroup_oom_disable();/** The task may have entered a memcg OOM situation but* if the allocation error was handled gracefully (no* VM_FAULT_OOM), there is no need to kill anything.* Just clean up the OOM state peacefully.*/if (task_in_memcg_oom(current) && !(ret & VM_FAULT_OOM))mem_cgroup_oom_synchronize(false);}return ret;}/** By the time we get here, we already hold the mm semaphore** The mmap_sem may have been released depending on flags and our* return value. See filemap_fault() and __lock_page_or_retry().*/static int __handle_mm_fault(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, unsigned int flags){pgd_t *pgd;pud_t *pud;pmd_t *pmd;pte_t *pte;if (unlikely(is_vm_hugetlb_page(vma)))return hugetlb_fault(mm, vma, address, flags);pgd = pgd_offset(mm, address);------------------------------------获取当前address在当前进程页表项PGD页面目录项。pud = pud_alloc(mm, pgd, address);--------------------------------获取当前address在当前进程对应PUD页表目录项。if (!pud)return VM_FAULT_OOM;pmd = pmd_alloc(mm, pud, address);--------------------------------找到当前地址的PMD页表目录项if (!pmd)return VM_FAULT_OOM;if (pmd_none(*pmd) && transparent_hugepage_enabled(vma)) {int ret = VM_FAULT_FALLBACK;if (!vma->vm_ops)ret = do_huge_pmd_anonymous_page(mm, vma, address,pmd, flags);if (!(ret & VM_FAULT_FALLBACK))return ret;} else {pmd_t orig_pmd = *pmd;int ret;barrier();if (pmd_trans_huge(orig_pmd)) {unsigned int dirty = flags & FAULT_FLAG_WRITE;/** If the pmd is splitting, return and retry the* the fault. Alternative: wait until the split* is done, and goto retry.*/if (pmd_trans_splitting(orig_pmd))return 0;if (pmd_protnone(orig_pmd))return do_huge_pmd_numa_page(mm, vma, address,orig_pmd, pmd);if (dirty && !pmd_write(orig_pmd)) {ret = do_huge_pmd_wp_page(mm, vma, address, pmd,orig_pmd);if (!(ret & VM_FAULT_FALLBACK))return ret;} else {huge_pmd_set_accessed(mm, vma, address, pmd,orig_pmd, dirty);return 0;}}}/** Use __pte_alloc instead of pte_alloc_map, because we can't* run pte_offset_map on the pmd, if an huge pmd could* materialize from under us from a different thread.*/if (unlikely(pmd_none(*pmd)) &&unlikely(__pte_alloc(mm, vma, pmd, address)))return VM_FAULT_OOM;/* if an huge pmd materialized from under us just retry later */if (unlikely(pmd_trans_huge(*pmd)))return 0;/** A regular pmd is established and it can't morph into a huge pmd* from under us anymore at this point because we hold the mmap_sem* read mode and khugepaged takes it in write mode. So now it's* safe to run pte_offset_map().*/pte = pte_offset_map(pmd, address);-------------------------------根据address从pmd中获取pte指针return handle_pte_fault(mm, vma, address, pte, pmd, flags);}

handle_pte_fault对各种缺页异常进行了区分,然后进行处理。

有几个关键点是区分一场类型的要点:

| 各种场景 | 缺页中断类型 | 处理函数 | ||

|---|---|---|---|---|

| 页不在内存中 | pte内容为空 | 有vm_ops | 文件映射缺页中断 | do_fault |

| 没有vm_ops | 匿名页面缺页中断 | do_anonymous_page | ||

| pte内容存在 | 页被交换到swap分区 | do_swap_page | ||

| 页在内存中 | 写时复制 | do_wp_page |

下面就来看一下流程:

/** These routines also need to handle stuff like marking pages dirty* and/or accessed for architectures that don't do it in hardware (most* RISC architectures). The early dirtying is also good on the i386.** There is also a hook called "update_mmu_cache()" that architectures* with external mmu caches can use to update those (ie the Sparc or* PowerPC hashed page tables that act as extended TLBs).** We enter with non-exclusive mmap_sem (to exclude vma changes,* but allow concurrent faults), and pte mapped but not yet locked.* We return with pte unmapped and unlocked.** The mmap_sem may have been released depending on flags and our* return value. See filemap_fault() and __lock_page_or_retry().*/staticinthandle_pte_fault(struct mm_struct *mm,struct vm_area_struct *vma, unsigned long address,pte_t *pte, pmd_t *pmd, unsigned int flags){pte_t entry;spinlock_t *ptl;/** some architectures can have larger ptes than wordsize,* e.g.ppc44x-defconfig has CONFIG_PTE_64BIT=y and CONFIG_32BIT=y,* so READ_ONCE or ACCESS_ONCE cannot guarantee atomic accesses.* The code below just needs a consistent view for the ifs and* we later double check anyway with the ptl lock held. So here* a barrier will do.*/entry = *pte;barrier();if (!pte_present(entry)) {------------------------------------------pte页表项中的L_PTE_PRESENT位没有置位,说明pte对应的物理页面不存在if (pte_none(entry)) {------------------------------------------pte页表项内容为空,同时pte对应物理页面也不存在if (vma->vm_ops) {if (likely(vma->vm_ops->fault))return do_fault(mm, vma, address, pte,--------------vm_ops操作函数fault存在,则是文件映射页面异常中断pmd, flags, entry);}return do_anonymous_page(mm, vma, address,------------------反之,vm_ops操作函数fault不存在,则是匿名页面异常中断pte, pmd, flags);}return do_swap_page(mm, vma, address,---------------------------pte对应的物理页面不存在,但是pte页表项不为空,说明该页被交换到swap分区了pte, pmd, flags, entry);}======================================下面都是物理页面存在的情况===========================================if (pte_protnone(entry))return do_numa_page(mm, vma, address, entry, pte, pmd);ptl = pte_lockptr(mm, pmd);spin_lock(ptl);if (unlikely(!pte_same(*pte, entry)))goto unlock;if (flags & FAULT_FLAG_WRITE) {if (!pte_write(entry))------------------------------------------对只读属性的页面产生写异常,触发写时复制缺页中断return do_wp_page(mm, vma, address,pte, pmd, ptl, entry);entry = pte_mkdirty(entry);}entry = pte_mkyoung(entry);if (ptep_set_access_flags(vma, address, pte, entry, flags & FAULT_FLAG_WRITE)) {update_mmu_cache(vma, address, pte);-----------------------------pte内容发生变化,需要把新的内容写入pte页表项中,并且刷新TLB和cache。} else {/** This is needed only for protection faults but the arch code* is not yet telling us if this is a protection fault or not.* This still avoids useless tlb flushes for .text page faults* with threads.*/if (flags & FAULT_FLAG_WRITE)flush_tlb_fix_spurious_fault(vma, address);}unlock:pte_unmap_unlock(pte, ptl);return 0;}

至此已经对缺页中断主分支进行了分析,下面几章节着重介绍三种类型的缺页中断:匿名页面、文件页面和写时复制。

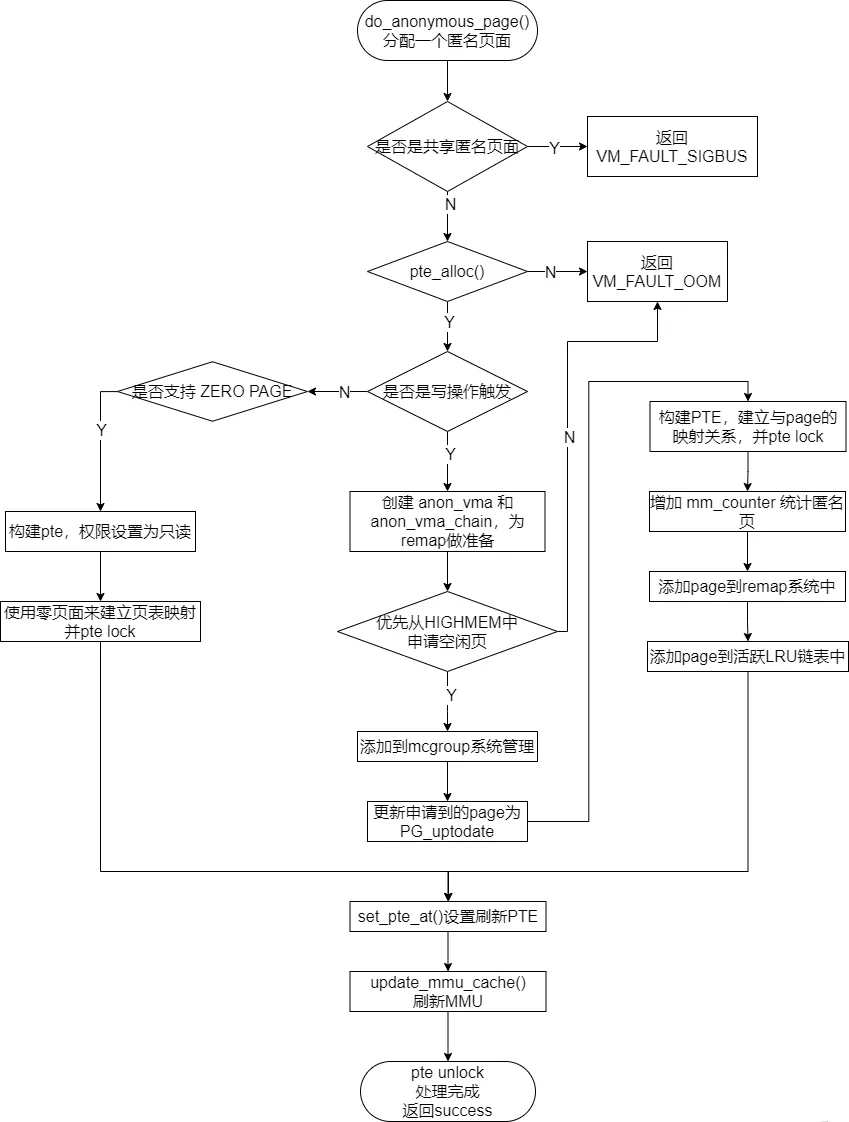

三、匿名页面缺页中断

匿名页面是相对于文件映射页面的,Linux中将所有没有关联到文件映射的页面成为匿名页面。其核心处理函数为do_anonymous_page()。

/** We enter with non-exclusive mmap_sem (to exclude vma changes,* but allow concurrent faults), and pte mapped but not yet locked.* We return with mmap_sem still held, but pte unmapped and unlocked.*/staticintdo_anonymous_page(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, pte_t *page_table, pmd_t *pmd,unsigned int flags){struct mem_cgroup *memcg;struct page *page;spinlock_t *ptl;pte_t entry;pte_unmap(page_table);/* Check if we need to add a guard page to the stack */if (check_stack_guard_page(vma, address) < 0)return VM_FAULT_SIGSEGV;/* Use the zero-page for reads */if (!(flags & FAULT_FLAG_WRITE) && !mm_forbids_zeropage(mm)) {--------------如果是分配只读属性的页面,使用一个zeroed的全局页面empty_zero_pageentry = pte_mkspecial(pfn_pte(my_zero_pfn(address),vma->vm_page_prot));page_table = pte_offset_map_lock(mm, pmd, address, &ptl);if (!pte_none(*page_table))goto unlock;goto setpte;------------------------------------------------------------跳转到setpte设置硬件pte表项,把新的PTE entry设置到硬件页表中}/* Allocate our own private page. */if (unlikely(anon_vma_prepare(vma)))goto oom;page = alloc_zeroed_user_highpage_movable(vma, address);-------------------如果页面是可写的,分配掩码是__GFP_MOVABLE|__GFP_WAIT|__GFP_IO|__GFP_FS|__GFP_HARDWALL|__GFP_HIGHMEM。最终调用alloc_pages,优先使用高端内存。if (!page)goto oom;/** The memory barrier inside __SetPageUptodate makes sure that* preceeding stores to the page contents become visible before* the set_pte_at() write.*/__SetPageUptodate(page);if (mem_cgroup_try_charge(page, mm, GFP_KERNEL, &memcg))goto oom_free_page;entry = mk_pte(page, vma->vm_page_prot);if (vma->vm_flags & VM_WRITE)entry = pte_mkwrite(pte_mkdirty(entry));-------------------------------生成一个新的PTE Entrypage_table = pte_offset_map_lock(mm, pmd, address, &ptl);if (!pte_none(*page_table))goto release;inc_mm_counter_fast(mm, MM_ANONPAGES);------------------------------------增加系统中匿名页面统计计数,计数类型是MM_ANONPAGESpage_add_new_anon_rmap(page, vma, address);-------------------------------将匿名页面添加到RMAP系统中mem_cgroup_commit_charge(page, memcg, false);lru_cache_add_active_or_unevictable(page, vma);---------------------------将匿名页面添加到LRU链表中setpte:set_pte_at(mm, address, page_table, entry);-------------------------------将entry设置到PTE硬件中/* No need to invalidate - it was non-present before */update_mmu_cache(vma, address, page_table);unlock:pte_unmap_unlock(page_table, ptl);return 0;release:mem_cgroup_cancel_charge(page, memcg);page_cache_release(page);goto unlock;oom_free_page:page_cache_release(page);oom:return VM_FAULT_OOM;}

四、文件映射缺页中断

文件映射缺页中断又分为三种:

flags中不包含FAULT_FLAG_WRITE,说明是只读异常,调用do_read_fault()

VMA的vm_flags没有定义VM_SHARED,说明这是一个私有文件映射,发生了写时复制COW,调用do_cow_fault()

其余情况则说明是共享文件映射缺页异常,调用do_shared_fault()

/** We enter with non-exclusive mmap_sem (to exclude vma changes,* but allow concurrent faults).* The mmap_sem may have been released depending on flags and our* return value. See filemap_fault() and __lock_page_or_retry().*/staticintdo_fault(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, pte_t *page_table, pmd_t *pmd,unsigned int flags, pte_t orig_pte){pgoff_t pgoff = (((address & PAGE_MASK)- vma->vm_start) >> PAGE_SHIFT) + vma->vm_pgoff;pte_unmap(page_table);if (!(flags & FAULT_FLAG_WRITE))return do_read_fault(mm, vma, address, pmd, pgoff, flags,-------------------------只读异常orig_pte);if (!(vma->vm_flags & VM_SHARED))return do_cow_fault(mm, vma, address, pmd, pgoff, flags,--------------------------写时复制异常orig_pte);return do_shared_fault(mm, vma, address, pmd, pgoff, flags, orig_pte);----------------共享映射异常}

4.1 只读文件映射缺页异常

handle_read_fault()处理只读异常FAULT_FLAG_WRITE类型的缺页异常。

staticintdo_read_fault(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, pmd_t *pmd,pgoff_t pgoff, unsigned int flags, pte_t orig_pte){struct page *fault_page;spinlock_t *ptl;pte_t *pte;int ret = 0;/** Let's call ->map_pages() first and use ->fault() as fallback* if page by the offset is not ready to be mapped (cold cache or* something).*/if (vma->vm_ops->map_pages && fault_around_bytes >> PAGE_SHIFT > 1) {------static unsigned long fault_around_bytes __read_mostly =rounddown_pow_of_two(65536);pte = pte_offset_map_lock(mm, pmd, address, &ptl); do_fault_around(vma, address, pte, pgoff, flags);----------------------围绕在缺页异常地址周围提前映射尽可能多的页面,提前建立进程地址空间和page cache的映射关系有利于减少发生缺页终端的次数。这里只是和现存的page cache提前建立映射关系,而不会去创建page cache。if (!pte_same(*pte, orig_pte))goto unlock_out;pte_unmap_unlock(pte, ptl);}ret = __do_fault(vma, address, pgoff, flags, NULL, &fault_page);-----------创建page cache的页面实际操作if (unlikely(ret & (VM_FAULT_ERROR | VM_FAULT_NOPAGE | VM_FAULT_RETRY)))return ret;pte = pte_offset_map_lock(mm, pmd, address, &ptl);if (unlikely(!pte_same(*pte, orig_pte))) {pte_unmap_unlock(pte, ptl);unlock_page(fault_page);page_cache_release(fault_page);return ret;}do_set_pte(vma, address, fault_page, pte, false, false);-------------------生成新的PTE Entry设置到硬件页表项中unlock_page(fault_page);unlock_out:pte_unmap_unlock(pte, ptl);return ret;}

4.2 私有文件写时复制COW异常

handle_cow_fault()0处理私有映射且发生写时复制COW的情况。

staticintdo_cow_fault(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, pmd_t *pmd,pgoff_t pgoff, unsigned int flags, pte_t orig_pte){struct page *fault_page, *new_page;struct mem_cgroup *memcg;spinlock_t *ptl;pte_t *pte;int ret;if (unlikely(anon_vma_prepare(vma)))return VM_FAULT_OOM;new_page = alloc_page_vma(GFP_HIGHUSER_MOVABLE, vma, address);----------------优先从高端内存分配可移动页面if (!new_page)return VM_FAULT_OOM;if (mem_cgroup_try_charge(new_page, mm, GFP_KERNEL, &memcg)) {page_cache_release(new_page);return VM_FAULT_OOM;}ret = __do_fault(vma, address, pgoff, flags, new_page, &fault_page);----------利用vma->vm_ops->fault()读取文件内容到fault_page中。if (unlikely(ret & (VM_FAULT_ERROR | VM_FAULT_NOPAGE | VM_FAULT_RETRY)))goto uncharge_out;if (fault_page)copy_user_highpage(new_page, fault_page, address, vma);-------------------将fault_page页面内容复制到新分配页面new_page中。__SetPageUptodate(new_page);pte = pte_offset_map_lock(mm, pmd, address, &ptl);if (unlikely(!pte_same(*pte, orig_pte))) {------------------------------------如果pte和orig_pte不一致,说明中间有人修改了pte,那么释放fault_page和new_page页面并退出。pte_unmap_unlock(pte, ptl);if (fault_page) {unlock_page(fault_page);page_cache_release(fault_page);} else {/** The fault handler has no page to lock, so it holds* i_mmap_lock for read to protect against truncate.*/i_mmap_unlock_read(vma->vm_file->f_mapping);}goto uncharge_out;}do_set_pte(vma, address, new_page, pte, true, true);-------------------------将PTE Entry设置到PTE硬件页表项pte中。mem_cgroup_commit_charge(new_page, memcg, false);lru_cache_add_active_or_unevictable(new_page, vma);--------------------------将新分配的new_page加入到LRU链表中。pte_unmap_unlock(pte, ptl);if (fault_page) {unlock_page(fault_page);page_cache_release(fault_page);-------------------------------------------释放fault_page页面} else {/** The fault handler has no page to lock, so it holds* i_mmap_lock for read to protect against truncate.*/i_mmap_unlock_read(vma->vm_file->f_mapping);}return ret;uncharge_out:mem_cgroup_cancel_charge(new_page, memcg);page_cache_release(new_page);return ret;}

4.3 共享文件缺页异常

do_shared_fault()处理共享文件映射中发生缺页异常的情况。

staticintdo_shared_fault(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, pmd_t *pmd,pgoff_t pgoff, unsigned int flags, pte_t orig_pte){struct page *fault_page;struct address_space *mapping;spinlock_t *ptl;pte_t *pte;int dirtied = 0;int ret, tmp;ret = __do_fault(vma, address, pgoff, flags, NULL, &fault_page);-----------------读取文件到fault_page中if (unlikely(ret & (VM_FAULT_ERROR | VM_FAULT_NOPAGE | VM_FAULT_RETRY)))return ret;/** Check if the backing address space wants to know that the page is* about to become writable*/if (vma->vm_ops->page_mkwrite) {unlock_page(fault_page);tmp = do_page_mkwrite(vma, fault_page, address);-----------------------------通知进程地址空间,fault_page将变成可写的,那么进程可能需要等待这个page的内容回写成功。if (unlikely(!tmp ||(tmp & (VM_FAULT_ERROR | VM_FAULT_NOPAGE)))) {page_cache_release(fault_page);return tmp;}}pte = pte_offset_map_lock(mm, pmd, address, &ptl);if (unlikely(!pte_same(*pte, orig_pte))) {---------------------------------------判断该异常地址对应的硬件页表项pte内容与之前的orig_pte是否一致。不一致,就需要释放fault_page。pte_unmap_unlock(pte, ptl);unlock_page(fault_page);page_cache_release(fault_page);return ret;}do_set_pte(vma, address, fault_page, pte, true, false);--------------------------利用fault_page新生成一个PTE Entry并设置到页表项pte中pte_unmap_unlock(pte, ptl);if (set_page_dirty(fault_page))--------------------------------------------------设置页面为脏dirtied = 1;/** Take a local copy of the address_space - page.mapping may be zeroed* by truncate after unlock_page(). The address_space itself remains* pinned by vma->vm_file's reference. We rely on unlock_page()'s* release semantics to prevent the compiler from undoing this copying.*/mapping = fault_page->mapping;unlock_page(fault_page);if ((dirtied || vma->vm_ops->page_mkwrite) && mapping) {/** Some device drivers do not set page.mapping but still* dirty their pages*/balance_dirty_pages_ratelimited(mapping);------------------------------------每设置一页为dirty,检查是否需要回写;如需要则回写一部分页面}if (!vma->vm_ops->page_mkwrite)file_update_time(vma->vm_file);return ret;}

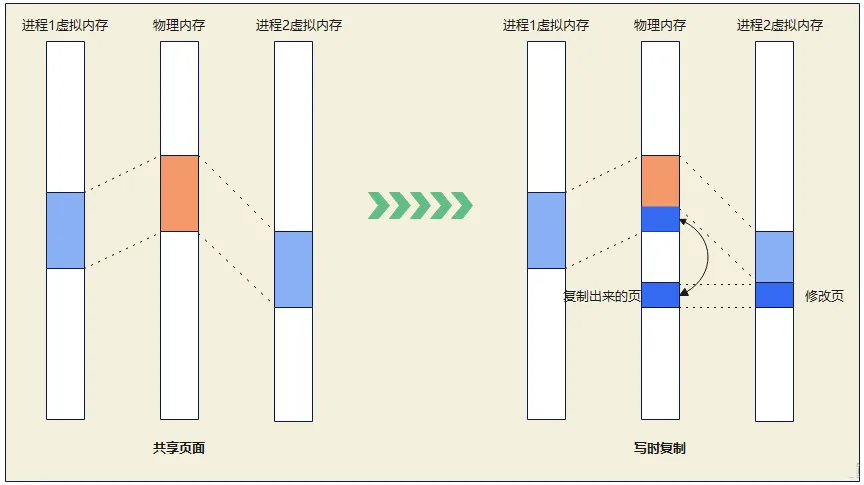

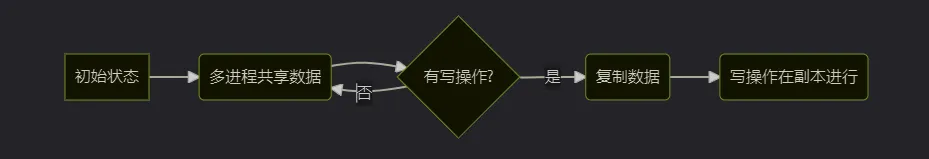

五、写时复制

do_wp_page()函数处理那些用户试图修改pte页表没有可写属性的页面,它新分配一个页面并且复制旧页面内容到新的页面中。

/** This routine handles present pages, when users try to write* to a shared page. It is done by copying the page to a new address* and decrementing the shared-page counter for the old page.*/staticintdo_wp_page(struct mm_struct *mm, struct vm_area_struct *vma,unsigned long address, pte_t *page_table, pmd_t *pmd,spinlock_t *ptl, pte_t orig_pte)__releases(ptl){struct page *old_page, *new_page = NULL;pte_t entry;int ret = 0;int page_mkwrite = 0;bool dirty_shared = false;unsigned long mmun_start = 0; /* For mmu_notifiers */unsigned long mmun_end = 0; /* For mmu_notifiers */struct mem_cgroup *memcg;old_page = vm_normal_page(vma, address, orig_pte);--------------------------查找缺页异常地址address对应页面的struct page数据结构,返回normal mapping页面。if (!old_page) {------------------------------------------------------------如果返回old_page为NULL,说明这时一个special mapping页面。/** VM_MIXEDMAP !pfn_valid() case, or VM_SOFTDIRTY clear on a* VM_PFNMAP VMA.** We should not cow pages in a shared writeable mapping.* Just mark the pages writable as we can't do any dirty* accounting on raw pfn maps.*/if ((vma->vm_flags & (VM_WRITE|VM_SHARED)) ==(VM_WRITE|VM_SHARED))goto reuse;goto gotten;}/** Take out anonymous pages first, anonymous shared vmas are* not dirty accountable.*/if (PageAnon(old_page) && !PageKsm(old_page)) {-----------------------------针对匿名非KSM页面之外的情况进行进行处理,if (!trylock_page(old_page)) {page_cache_get(old_page);pte_unmap_unlock(page_table, ptl);lock_page(old_page);page_table = pte_offset_map_lock(mm, pmd, address,&ptl);if (!pte_same(*page_table, orig_pte)) {unlock_page(old_page);goto unlock;}page_cache_release(old_page);}if (reuse_swap_page(old_page)) {/** The page is all ours. Move it to our anon_vma so* the rmap code will not search our parent or siblings.* Protected against the rmap code by the page lock.*/page_move_anon_rmap(old_page, vma, address);unlock_page(old_page);goto reuse;}unlock_page(old_page);} else if (unlikely((vma->vm_flags & (VM_WRITE|VM_SHARED)) ==---------------处理匿名非KSM页面之外的情况(VM_WRITE|VM_SHARED))) {page_cache_get(old_page);/** Only catch write-faults on shared writable pages,* read-only shared pages can get COWed by* get_user_pages(.write=1, .force=1).*/if (vma->vm_ops && vma->vm_ops->page_mkwrite) {int tmp;pte_unmap_unlock(page_table, ptl);tmp = do_page_mkwrite(vma, old_page, address);if (unlikely(!tmp || (tmp &(VM_FAULT_ERROR | VM_FAULT_NOPAGE)))) {page_cache_release(old_page);return tmp;}/** Since we dropped the lock we need to revalidate* the PTE as someone else may have changed it. If* they did, we just return, as we can count on the* MMU to tell us if they didn't also make it writable.*/page_table = pte_offset_map_lock(mm, pmd, address,&ptl);if (!pte_same(*page_table, orig_pte)) {unlock_page(old_page);goto unlock;}page_mkwrite = 1;}dirty_shared = true;reuse:/** Clear the pages cpupid information as the existing* information potentially belongs to a now completely* unrelated process.*/if (old_page)page_cpupid_xchg_last(old_page, (1 << LAST_CPUPID_SHIFT) - 1);flush_cache_page(vma, address, pte_pfn(orig_pte));entry = pte_mkyoung(orig_pte);entry = maybe_mkwrite(pte_mkdirty(entry), vma);if (ptep_set_access_flags(vma, address, page_table, entry,1))update_mmu_cache(vma, address, page_table);pte_unmap_unlock(page_table, ptl);ret |= VM_FAULT_WRITE;if (dirty_shared) {struct address_space *mapping;int dirtied;if (!page_mkwrite)lock_page(old_page);dirtied = set_page_dirty(old_page);VM_BUG_ON_PAGE(PageAnon(old_page), old_page);mapping = old_page->mapping;unlock_page(old_page);page_cache_release(old_page);if ((dirtied || page_mkwrite) && mapping) {/** Some device drivers do not set page.mapping* but still dirty their pages*/balance_dirty_pages_ratelimited(mapping);}if (!page_mkwrite)file_update_time(vma->vm_file);}return ret;}/** Ok, we need to copy. Oh, well..*/page_cache_get(old_page);gotten:------------------------------------------------------------------------表示需要新建一个页面,也就是写时复制。pte_unmap_unlock(page_table, ptl);if (unlikely(anon_vma_prepare(vma)))goto oom;if (is_zero_pfn(pte_pfn(orig_pte))) {new_page = alloc_zeroed_user_highpage_movable(vma, address);----------分配高端、可移动、零页面if (!new_page)goto oom;} else {new_page = alloc_page_vma(GFP_HIGHUSER_MOVABLE, vma, address);--------分配高端、可移动页面if (!new_page)goto oom;cow_user_page(new_page, old_page, address, vma);}__SetPageUptodate(new_page);if (mem_cgroup_try_charge(new_page, mm, GFP_KERNEL, &memcg))goto oom_free_new;mmun_start = address & PAGE_MASK;mmun_end = mmun_start + PAGE_SIZE;mmu_notifier_invalidate_range_start(mm, mmun_start, mmun_end);/** Re-check the pte - we dropped the lock*/page_table = pte_offset_map_lock(mm, pmd, address, &ptl);if (likely(pte_same(*page_table, orig_pte))) {if (old_page) {if (!PageAnon(old_page)) {dec_mm_counter_fast(mm, MM_FILEPAGES);inc_mm_counter_fast(mm, MM_ANONPAGES);}} elseinc_mm_counter_fast(mm, MM_ANONPAGES);flush_cache_page(vma, address, pte_pfn(orig_pte));entry = mk_pte(new_page, vma->vm_page_prot);---------------------------利用new_page和vma生成PTE Entryentry = maybe_mkwrite(pte_mkdirty(entry), vma);/** Clear the pte entry and flush it first, before updating the* pte with the new entry. This will avoid a race condition* seen in the presence of one thread doing SMC and another* thread doing COW.*/ptep_clear_flush_notify(vma, address, page_table);page_add_new_anon_rmap(new_page, vma, address);------------------------把new_page添加到RMAP反向映射机制,设置页面计数_mapcount为0。mem_cgroup_commit_charge(new_page, memcg, false);lru_cache_add_active_or_unevictable(new_page, vma);--------------------把new_page添加到活跃的LRU链表中/** We call the notify macro here because, when using secondary* mmu page tables (such as kvm shadow page tables), we want the* new page to be mapped directly into the secondary page table.*/set_pte_at_notify(mm, address, page_table, entry);update_mmu_cache(vma, address, page_table);if (old_page) {/** Only after switching the pte to the new page may* we remove the mapcount here. Otherwise another* process may come and find the rmap count decremented* before the pte is switched to the new page, and* "reuse" the old page writing into it while our pte* here still points into it and can be read by other* threads.** The critical issue is to order this* page_remove_rmap with the ptp_clear_flush above.* Those stores are ordered by (if nothing else,)* the barrier present in the atomic_add_negative* in page_remove_rmap.** Then the TLB flush in ptep_clear_flush ensures that* no process can access the old page before the* decremented mapcount is visible. And the old page* cannot be reused until after the decremented* mapcount is visible. So transitively, TLBs to* old page will be flushed before it can be reused.*/page_remove_rmap(old_page);--------------------------------------_mapcount计数减1}/* Free the old page.. */new_page = old_page;ret |= VM_FAULT_WRITE;} elsemem_cgroup_cancel_charge(new_page, memcg);if (new_page)page_cache_release(new_page);----------------------------------------释放new_page,这里new_page==old_page。unlock:pte_unmap_unlock(page_table, ptl);if (mmun_end > mmun_start)mmu_notifier_invalidate_range_end(mm, mmun_start, mmun_end);if (old_page) {/** Don't let another task, with possibly unlocked vma,* keep the mlocked page.*/if ((ret & VM_FAULT_WRITE) && (vma->vm_flags & VM_LOCKED)) {lock_page(old_page); /* LRU manipulation */munlock_vma_page(old_page);unlock_page(old_page);}page_cache_release(old_page);}return ret;oom_free_new:page_cache_release(new_page);oom:if (old_page)page_cache_release(old_page);return VM_FAULT_OOM;}

原作者:ArnoldLu

原文链接:

https://www.cnblogs.com/arnoldlu/p/8335475.html