Linux Memory Management: Data Structures and APIs

- 2026-03-27 13:15:55

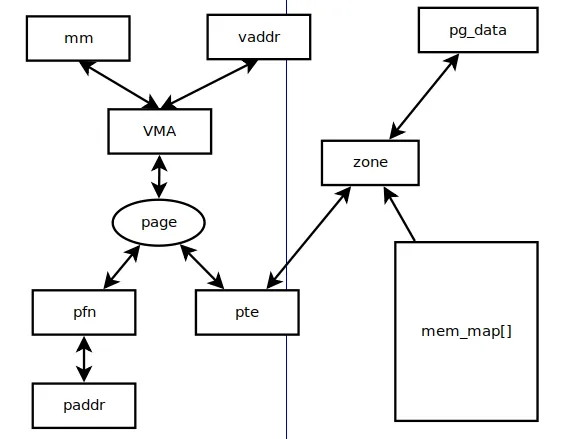

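On most Linux systems, the memory devices are initialized by the BIOS or bootloader, which then passes the size of the DDR to the Linux kernel. From the kernel's point of view, DDR is therefore just a range of physical address space.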

1.1 Finding the VMA from the mm structure and a virtual address vaddr

    extern struct vm_area_struct *find_vma(struct mm_struct *mm, unsigned long addr);
    extern struct vm_area_struct *find_vma_prev(struct mm_struct *mm, unsigned long addr,
                                                struct vm_area_struct **pprev);

    static inline struct vm_area_struct *find_vma_intersection(struct mm_struct *mm,
            unsigned long start_addr, unsigned long end_addr)
    {
        struct vm_area_struct *vma = find_vma(mm, start_addr);

        if (vma && end_addr <= vma->vm_start)
            vma = NULL;
        return vma;
    }

Going the other way, from a VMA to the mm structure, is direct: struct vm_area_struct holds a pointer (vm_mm) to its struct mm_struct.

    struct mm_struct {
        struct vm_area_struct *mmap;    /* list of VMAs */
        struct rb_root mm_rb;
        u32 vmacache_seqnum;            /* per-thread vmacache */
        ...
    };

    struct vm_area_struct {
        /* The first cache line has the info for VMA tree walking. */
        unsigned long vm_start;         /* Our start address within vm_mm. */
        unsigned long vm_end;           /* The first byte after our end address
                                           within vm_mm. */
        ...
        struct rb_node vm_rb;
        ...
        struct mm_struct *vm_mm;        /* The address space we belong to. */
        ...
    };

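find_vma() finds a vma given an mm_struct and an addr.

find_vma() first looks for addr in the current task's current->vmacache[]. On a miss, it descends the mm->mm_rb red-black tree, recovers the vma behind each rb_node, and checks it against addr. Note that the result is the first VMA whose vm_end is greater than addr, so the returned VMA may start above addr; callers that need containment must also check vm_start.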

    struct vm_area_struct *find_vma(struct mm_struct *mm, unsigned long addr)
    {
        struct rb_node *rb_node;
        struct vm_area_struct *vma;

        /* Check the cache first: look for a hit in task_struct->vmacache[]. */
        vma = vmacache_find(mm, addr);
        if (likely(vma))
            return vma;

        rb_node = mm->mm_rb.rb_node;    /* root rb_node of this mm */
        vma = NULL;

        while (rb_node) {               /* descend this mm's red-black tree */
            struct vm_area_struct *tmp;

            /* Recover the vma that embeds this rb_node. */
            tmp = rb_entry(rb_node, struct vm_area_struct, vm_rb);

            if (tmp->vm_end > addr) {
                vma = tmp;
                if (tmp->vm_start <= addr)
                    break;              /* found a suitable vma, stop */
                rb_node = rb_node->rb_left;
            } else
                rb_node = rb_node->rb_right;
        }

        if (vma)
            vmacache_update(addr, vma); /* store this vma in vmacache[] */
        return vma;
    }
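The lookup rule is easier to see outside the kernel. The following is a minimal userspace sketch, not kernel code: it replaces the red-black tree with a sorted array and a binary search, and every `toy_` name is a hypothetical stand-in. It keeps the same rule as find_vma() (return the first region with vm_end > addr) and the same extra check as find_vma_intersection().

```c
#include <stddef.h>

/* Hypothetical stand-in for struct vm_area_struct: covers [vm_start, vm_end). */
struct toy_vma {
    unsigned long vm_start;
    unsigned long vm_end;
};

/*
 * Return the first region with vm_end > addr, or NULL if there is none.
 * Assumes `vmas` is sorted by address and the regions do not overlap.
 */
static const struct toy_vma *toy_find_vma(const struct toy_vma *vmas, size_t n,
                                          unsigned long addr)
{
    const struct toy_vma *found = NULL;
    size_t lo = 0, hi = n;

    while (lo < hi) {
        size_t mid = lo + (hi - lo) / 2;

        if (vmas[mid].vm_end > addr) {
            found = &vmas[mid];         /* candidate; an earlier one may exist */
            if (vmas[mid].vm_start <= addr)
                break;                  /* addr lies inside this region */
            hi = mid;                   /* like following rb_left */
        } else {
            lo = mid + 1;               /* like following rb_right */
        }
    }
    return found;
}

/* Same check as find_vma_intersection(): drop a region that starts at or after end. */
static const struct toy_vma *toy_find_vma_intersection(const struct toy_vma *vmas,
                                                       size_t n,
                                                       unsigned long start,
                                                       unsigned long end)
{
    const struct toy_vma *vma = toy_find_vma(vmas, n, start);

    if (vma && end <= vma->vm_start)
        vma = NULL;
    return vma;
}
```

With two regions [0x1000, 0x3000) and [0x5000, 0x6000), looking up 0x4000 (which falls in the gap) returns the second region, which is why callers that need containment also compare against vm_start.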

1.2 Finding the virtual address vaddr from a page and a VMA

vma_address(), discussed here for anonymous pages:

    inline unsigned long
    vma_address(struct page *page, struct vm_area_struct *vma)
    {
        unsigned long address = __vma_address(page, vma);

        /* page should be within @vma mapping range */
        VM_BUG_ON_VMA(address < vma->vm_start || address >= vma->vm_end, vma);

        return address;
    }

    static inline unsigned long
    __vma_address(struct page *page, struct vm_area_struct *vma)
    {
        pgoff_t pgoff = page_to_pgoff(page);

        /* Use page->index to compute the page's address within this vma. */
        return vma->vm_start + ((pgoff - vma->vm_pgoff) << PAGE_SHIFT);
    }

    static inline pgoff_t page_to_pgoff(struct page *page)
    {
        if (unlikely(PageHeadHuge(page)))
            return page->index << compound_order(page);
        else
            /* page->index is the page's index within the mapping. */
            return page->index << (PAGE_CACHE_SHIFT - PAGE_SHIFT);
    }
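The arithmetic in __vma_address() can be checked with plain numbers. This is a small userspace sketch under the assumption of 4 KiB pages (PAGE_SHIFT of 12); the `toy_` helpers are hypothetical names. The second helper goes in the opposite direction, following the same formula the kernel's linear_page_index() uses.

```c
#define TOY_PAGE_SHIFT 12   /* assumption: 4 KiB pages */

/* Mirrors __vma_address(): vm_start plus the page's distance from vm_pgoff. */
static unsigned long toy_vma_address(unsigned long vm_start,
                                     unsigned long vm_pgoff,
                                     unsigned long page_index)
{
    return vm_start + ((page_index - vm_pgoff) << TOY_PAGE_SHIFT);
}

/* Opposite direction: virtual address back to a page index. */
static unsigned long toy_linear_page_index(unsigned long vm_start,
                                           unsigned long vm_pgoff,
                                           unsigned long address)
{
    return ((address - vm_start) >> TOY_PAGE_SHIFT) + vm_pgoff;
}
```

For a VMA starting at 0x40000000 with vm_pgoff 0x10, a page with index 0x13 is three pages in, so it maps at 0x40003000.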

1.3 Finding all VMAs that map a page

This is done by the reverse-mapping (rmap) system through rmap_walk(); for anonymous pages the work is done by rmap_walk_anon().

    static int rmap_walk_anon(struct page *page, struct rmap_walk_control *rwc)
    {
        struct anon_vma *anon_vma;
        pgoff_t pgoff;
        struct anon_vma_chain *avc;
        int ret = SWAP_AGAIN;

        /* Find the anon_vma through page->mapping. */
        anon_vma = rmap_walk_anon_lock(page, rwc);
        if (!anon_vma)
            return ret;

        pgoff = page_to_pgoff(page);
        /* Walk the anon_vma->rb_root tree, taking out one avc at a time. */
        anon_vma_interval_tree_foreach(avc, &anon_vma->rb_root, pgoff, pgoff) {
            /* Each avc points to one vma that maps the page. */
            struct vm_area_struct *vma = avc->vma;
            unsigned long address = vma_address(page, vma);

            if (rwc->invalid_vma && rwc->invalid_vma(vma, rwc->arg))
                continue;

            ret = rwc->rmap_one(page, vma, address, rwc->arg);
            if (ret != SWAP_AGAIN)
                break;
            if (rwc->done && rwc->done(page))
                break;
        }
        anon_vma_unlock_read(anon_vma);
        return ret;
    }

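To go from a VMA and a virtual address vaddr to the corresponding struct page, use follow_page().

    static inline struct page *follow_page(struct vm_area_struct *vma,
            unsigned long address, unsigned int foll_flags)

follow_page() walks the page tables for the virtual address vaddr to find the pte.

The pte gives the page frame number (pfn), and the pfn is then used to locate the corresponding struct page in mem_map[].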

1.4 Converting between page and pfn

page_to_pfn() and pfn_to_page() are defined in linux/include/asm-generic/memory_model.h.

The implementation depends on the memory model; CONFIG_FLATMEM is assumed here.

    #define page_to_pfn __page_to_pfn
    #define pfn_to_page __pfn_to_page

    /* pfn minus ARCH_PFN_OFFSET is the page's offset into mem_map. */
    #define __pfn_to_page(pfn)  (mem_map + ((pfn) - ARCH_PFN_OFFSET))

    /* The page's offset into mem_map plus ARCH_PFN_OFFSET is its pfn. */
    #define __page_to_pfn(page) ((unsigned long)((page) - mem_map) + \
                                 ARCH_PFN_OFFSET)

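In the Linux kernel, all physical memory is described by struct page objects. These objects are stored in an array, and mem_map is the address of that array.

The kernel manages physical memory per node: the type that describes a memory node, struct pglist_data, has a node_mem_map member.

In other words, for each memory node, node_mem_map is the base address of the array of struct page objects that describe all memory in that node.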
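The FLATMEM conversion is pointer arithmetic on one array, which a userspace model can reproduce. The sketch below is illustrative only: `toy_mem_map`, `TOY_ARCH_PFN_OFFSET`, and the 16-entry array size are assumptions chosen for the example, standing in for a board whose RAM starts at physical address 0x80000000 with 4 KiB pages.

```c
#include <stddef.h>

/* Hypothetical stand-in for struct page. */
struct toy_page {
    unsigned long flags;
};

/* Assumption: RAM starts at 0x80000000, so the first pfn is 0x80000. */
#define TOY_ARCH_PFN_OFFSET 0x80000UL
#define TOY_NR_PAGES        16

/* One descriptor per physical page frame, like mem_map under FLATMEM. */
static struct toy_page toy_mem_map[TOY_NR_PAGES];

/* pfn minus the offset is the index into the array. */
static struct toy_page *toy_pfn_to_page(unsigned long pfn)
{
    return toy_mem_map + (pfn - TOY_ARCH_PFN_OFFSET);
}

/* The index into the array plus the offset is the pfn. */
static unsigned long toy_page_to_pfn(const struct toy_page *page)
{
    return (unsigned long)(page - toy_mem_map) + TOY_ARCH_PFN_OFFSET;
}
```

Because both directions are a single addition or subtraction, the conversion is cheap, at the cost of requiring one contiguous descriptor array for the whole pfn range.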

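1.5 Converting between pfn/page and paddr

A physical address paddr and a pfn convert into each other simply by shifting by PAGE_SHIFT.

Converting between page and paddr goes through pfn as an intermediate step.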

    /*
     * Convert a physical address to a Page Frame Number and back
     */
    #define __phys_to_pfn(paddr)    ((unsigned long)((paddr) >> PAGE_SHIFT))
    #define __pfn_to_phys(pfn)      ((phys_addr_t)(pfn) << PAGE_SHIFT)

    /*
     * Convert a page to/from a physical address
     */
    #define page_to_phys(page)      (__pfn_to_phys(page_to_pfn(page)))
    #define phys_to_page(phys)      (pfn_to_page(__phys_to_pfn(phys)))
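One detail in __pfn_to_phys() is that the pfn is cast to phys_addr_t before the shift. The userspace sketch below shows why that matters, assuming 4 KiB pages, a 32-bit pfn, and a 64-bit physical address type; the `toy_` names are hypothetical.

```c
#include <stdint.h>

#define TOY_PAGE_SHIFT 12           /* assumption: 4 KiB pages */

typedef uint64_t toy_phys_addr_t;   /* assumption: physical addresses wider than 32 bits */

/* Physical address to page frame number: drop the in-page offset bits. */
static uint32_t toy_phys_to_pfn(toy_phys_addr_t paddr)
{
    return (uint32_t)(paddr >> TOY_PAGE_SHIFT);
}

/* Page frame number to physical address: widen first, then shift. */
static toy_phys_addr_t toy_pfn_to_phys(uint32_t pfn)
{
    return (toy_phys_addr_t)pfn << TOY_PAGE_SHIFT;
}
```

Without the widening cast, a pfn of 0x100000 shifted left by 12 in 32-bit arithmetic would wrap to 0 instead of giving 0x100000000 (4 GiB).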

1.6 Converting between page and pte

Going from page to pfn and then from pfn to pte gives the page-to-pte conversion.

    #define pfn_pte(pfn,prot)   __pte(__pfn_to_phys(pfn) | pgprot_val(prot))

    #define __page_to_pfn(page) ((unsigned long)((page) - mem_map) + \
                                 ARCH_PFN_OFFSET)

From pte to page: pte_pfn() extracts the pfn, and the pfn then locates the page.

    #define pte_page(pte)   pfn_to_page(pte_pfn(pte))
    #define pte_pfn(pte)    ((pte_val(pte) & PHYS_MASK) >> PAGE_SHIFT)
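The pte layout implied by pfn_pte() and pte_pfn() is a physical page address in the upper bits with protection bits below PAGE_SHIFT. The following userspace sketch assumes a 32-bit pte, 4 KiB pages, and a PHYS_MASK covering all 32 bits; the `toy_` names and the sample protection value are made up for illustration.

```c
#include <stdint.h>

#define TOY_PAGE_SHIFT 12           /* assumption: 4 KiB pages */
#define TOY_PHYS_MASK  0xffffffffu  /* assumption: 32-bit physical addresses */

typedef uint32_t toy_pte_t;

/* Build a pte: page frame address in the high bits, protection bits below. */
static toy_pte_t toy_pfn_pte(uint32_t pfn, uint32_t prot)
{
    return (pfn << TOY_PAGE_SHIFT) | prot;
}

/* Recover the pfn: mask to the physical address, then drop the low bits. */
static uint32_t toy_pte_pfn(toy_pte_t pte)
{
    return (pte & TOY_PHYS_MASK) >> TOY_PAGE_SHIFT;
}
```

The round trip discards the protection bits, so pte_page() depends only on which frame the entry points to.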

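1.7 Converting between zone and page

From zone to page: struct zone has zone->zone_start_pfn, the pfn of the zone's first page, and the pfn then locates the struct page.

From page to zone: page_zone() returns the zone a page belongs to, decoded from the bit layout of page->flags.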
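Storing the zone in page->flags means page_zone() needs only a shift and a mask. The sketch below models that idea in userspace; the field width and position (2 bits at the top of a 32-bit word) are assumptions for the example, since the real layout depends on the kernel configuration.

```c
#include <stdint.h>

/* Assumed layout: the zone number occupies the top 2 bits of a 32-bit flags word. */
#define TOY_ZONES_WIDTH   2
#define TOY_ZONES_PGSHIFT (32 - TOY_ZONES_WIDTH)
#define TOY_ZONES_MASK    ((1u << TOY_ZONES_WIDTH) - 1)

/* Record the zone number in flags without touching the other bits. */
static uint32_t toy_set_page_zone(uint32_t flags, uint32_t zone)
{
    flags &= ~(TOY_ZONES_MASK << TOY_ZONES_PGSHIFT);
    flags |= (zone & TOY_ZONES_MASK) << TOY_ZONES_PGSHIFT;
    return flags;
}

/* Extract the zone number, as a page-to-zone lookup would. */
static uint32_t toy_page_zonenum(uint32_t flags)
{
    return (flags >> TOY_ZONES_PGSHIFT) & TOY_ZONES_MASK;
}
```

The low bits remain free for the PG_XXX flags, which is why a single word can carry both the page state and its zone.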

1.8 Converting between zone and pg_data

From pg_data to zone: pg_data_t->node_zones.

From zone to pg_data: zone->zone_pgdat.

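2. Common Memory Management APIs

Memory management is intricate. Besides using the user-space APIs to observe and understand how Linux kernel memory works, it also helps to summarize the memory management APIs commonly used inside the kernel.

2.1 Page tables

The page-table APIs fall into four groups: page-table lookup, testing the status bits of a page-table entry, modifying page-table entries, and the relationship between page and pfn.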

    /* Page-table lookup */
    #define pgd_offset_k(addr)          pgd_offset(&init_mm, addr)
    #define pgd_index(addr)             ((addr) >> PGDIR_SHIFT)
    #define pgd_offset(mm, addr)        ((mm)->pgd + pgd_index(addr))
    #define pte_index(addr)             (((addr) >> PAGE_SHIFT) & (PTRS_PER_PTE - 1))
    #define pte_offset_kernel(pmd,addr) (pmd_page_vaddr(*(pmd)) + pte_index(addr))
    #define pte_offset_map(pmd,addr)    (__pte_map(pmd) + pte_index(addr))
    #define pte_unmap(pte)              __pte_unmap(pte)

    /* Testing the status bits of a page-table entry */
    #define pte_none(pte)       (!pte_val(pte))
    #define pte_present(pte)    (pte_isset((pte), L_PTE_PRESENT))
    #define pte_valid(pte)      (pte_isset((pte), L_PTE_VALID))
    #define pte_accessible(mm, pte) (mm_tlb_flush_pending(mm) ? pte_present(pte) : pte_valid(pte))
    #define pte_write(pte)      (pte_isclear((pte), L_PTE_RDONLY))
    #define pte_dirty(pte)      (pte_isset((pte), L_PTE_DIRTY))
    #define pte_young(pte)      (pte_isset((pte), L_PTE_YOUNG))
    #define pte_exec(pte)       (pte_isclear((pte), L_PTE_XN))

    /* Modifying page-table entries */
    #define mk_pte(page,prot)   pfn_pte(page_to_pfn(page), prot)

    static inline pte_t pte_wrprotect(pte_t pte)
    {
        return set_pte_bit(pte, __pgprot(L_PTE_RDONLY));
    }

    static inline pte_t pte_mkwrite(pte_t pte)
    {
        return clear_pte_bit(pte, __pgprot(L_PTE_RDONLY));
    }

    static inline pte_t pte_mkclean(pte_t pte)
    {
        return clear_pte_bit(pte, __pgprot(L_PTE_DIRTY));
    }

    static inline pte_t pte_mkdirty(pte_t pte)
    {
        return set_pte_bit(pte, __pgprot(L_PTE_DIRTY));
    }

    static inline pte_t pte_mkold(pte_t pte)
    {
        return clear_pte_bit(pte, __pgprot(L_PTE_YOUNG));
    }

    static inline pte_t pte_mkyoung(pte_t pte)
    {
        return set_pte_bit(pte, __pgprot(L_PTE_YOUNG));
    }

    static inline pte_t pte_mkexec(pte_t pte)
    {
        return clear_pte_bit(pte, __pgprot(L_PTE_XN));
    }

    static inline pte_t pte_mknexec(pte_t pte)
    {
        return set_pte_bit(pte, __pgprot(L_PTE_XN));
    }

    static inline void set_pte_at(struct mm_struct *mm, unsigned long addr,
                                  pte_t *ptep, pte_t pteval)
    {
        unsigned long ext = 0;

        if (addr < TASK_SIZE && pte_valid_user(pteval)) {
            if (!pte_special(pteval))
                __sync_icache_dcache(pteval);
            ext |= PTE_EXT_NG;
        }

        set_pte_ext(ptep, pteval, ext);
    }

    static inline pte_t clear_pte_bit(pte_t pte, pgprot_t prot)
    {
        pte_val(pte) &= ~pgprot_val(prot);
        return pte;
    }

    static inline pte_t set_pte_bit(pte_t pte, pgprot_t prot)
    {
        pte_val(pte) |= pgprot_val(prot);
        return pte;
    }

    int ptep_set_access_flags(struct vm_area_struct *vma,
                              unsigned long address, pte_t *ptep,
                              pte_t entry, int dirty)
    {
        int changed = !pte_same(*ptep, entry);

        if (changed) {
            set_pte_at(vma->vm_mm, address, ptep, entry);
            flush_tlb_fix_spurious_fault(vma, address);
        }
        return changed;
    }

    /* Relationship between page and pfn */
    #define pte_pfn(pte)        ((pte_val(pte) & PHYS_MASK) >> PAGE_SHIFT)
    #define pfn_pte(pfn,prot)   __pte(__pfn_to_phys(pfn) | pgprot_val(prot))
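To make the lookup and modification macros concrete, here is a userspace sketch of the index arithmetic and of the set/clear-bit helpers. It assumes a two-level layout with PGDIR_SHIFT of 21, 512 ptes per table, and 4 KiB pages; `TOY_PTE_RDONLY` is a hypothetical bit position, and all `toy_` names are stand-ins rather than kernel symbols.

```c
#include <stdint.h>

/* Assumed two-level layout for the example. */
#define TOY_PAGE_SHIFT   12
#define TOY_PGDIR_SHIFT  21
#define TOY_PTRS_PER_PTE 512

/* Which top-level entry covers addr (like pgd_index()). */
static uint32_t toy_pgd_index(uint32_t addr)
{
    return addr >> TOY_PGDIR_SHIFT;
}

/* Which pte inside that table covers addr (like pte_index()). */
static uint32_t toy_pte_index(uint32_t addr)
{
    return (addr >> TOY_PAGE_SHIFT) & (TOY_PTRS_PER_PTE - 1);
}

typedef uint32_t toy_pte_t;

#define TOY_PTE_RDONLY (1u << 7)    /* hypothetical read-only bit */

static toy_pte_t toy_set_pte_bit(toy_pte_t pte, uint32_t bit)
{
    return pte | bit;
}

static toy_pte_t toy_clear_pte_bit(toy_pte_t pte, uint32_t bit)
{
    return pte & ~bit;
}

/* Writable means the read-only bit is clear, as in pte_write(). */
static int toy_pte_write(toy_pte_t pte)
{
    return (pte & TOY_PTE_RDONLY) == 0;
}

static toy_pte_t toy_pte_wrprotect(toy_pte_t pte)
{
    return toy_set_pte_bit(pte, TOY_PTE_RDONLY);
}

static toy_pte_t toy_pte_mkwrite(toy_pte_t pte)
{
    return toy_clear_pte_bit(pte, TOY_PTE_RDONLY);
}
```

For the address 0xC0A03456 under these assumptions, the top-level index is 0x605, the pte index is 3, and the remaining 0x456 is the offset inside the page. The helpers return a modified copy of the entry, which matches the kernel pattern of building a new pte value and then installing it with set_pte_at().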

2.2 Memory allocation

The memory allocation APIs commonly used in the kernel are listed below.

Allocating and freeing pages:

    #define alloc_pages(gfp_mask, order) \
            alloc_pages_node(numa_node_id(), gfp_mask, order)

    static inline struct page *
    __alloc_pages(gfp_t gfp_mask, unsigned int order, struct zonelist *zonelist)
    {
        return __alloc_pages_nodemask(gfp_mask, order, zonelist, NULL);
    }

    unsigned long __get_free_pages(gfp_t gfp_mask, unsigned int order)
    {
        struct page *page;

        /*
         * __get_free_pages() returns a 32-bit address, which cannot represent
         * a highmem page
         */
        VM_BUG_ON((gfp_mask & __GFP_HIGHMEM) != 0);

        page = alloc_pages(gfp_mask, order);
        if (!page)
            return 0;
        return (unsigned long) page_address(page);
    }

    void free_pages(unsigned long addr, unsigned int order)
    {
        if (addr != 0) {
            VM_BUG_ON(!virt_addr_valid((void *)addr));
            __free_pages(virt_to_page((void *)addr), order);
        }
    }

    void __free_pages(struct page *page, unsigned int order)
    {
        if (put_page_testzero(page)) {
            if (order == 0)
                free_hot_cold_page(page, false);
            else
                __free_pages_ok(page, order);
        }
    }
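The order argument of these functions is a power-of-two exponent: an allocation of order n covers 2^n contiguous pages. The userspace sketch below shows the size-to-order rounding that a caller has to do, similar in spirit to the kernel's get_order() helper; it assumes 4 KiB pages and modest sizes, and the `toy_` names are hypothetical.

```c
#include <stddef.h>

#define TOY_PAGE_SHIFT 12   /* assumption: 4 KiB pages */
#define TOY_PAGE_SIZE  ((size_t)1 << TOY_PAGE_SHIFT)

/* Smallest order whose block (PAGE_SIZE << order) can hold `size` bytes. */
static unsigned int toy_get_order(size_t size)
{
    unsigned int order = 0;

    while ((TOY_PAGE_SIZE << order) < size)
        order++;
    return order;
}

/* Number of bytes covered by an allocation of the given order. */
static size_t toy_order_to_bytes(unsigned int order)
{
    return TOY_PAGE_SIZE << order;
}
```

A request for three pages (12288 bytes) rounds up to order 2, which is four pages, so a quarter of the block goes unused. This rounding is one reason large odd-sized buffers are often better served by vmalloc() or by several smaller allocations.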

Slab allocator:

    struct kmem_cache *kmem_cache_create(const char *, size_t, size_t,
                                         unsigned long,
                                         void (*)(void *));
    void kmem_cache_destroy(struct kmem_cache *);
    void *kmem_cache_alloc(struct kmem_cache *, gfp_t flags);
    void kmem_cache_free(struct kmem_cache *, void *);
    static __always_inline void *kmalloc(size_t size, gfp_t flags);
    static inline void kfree(void *p);

vmalloc-related:

    extern void *vmalloc(unsigned long size);
    extern void *vzalloc(unsigned long size);
    extern void *vmalloc_user(unsigned long size);
    extern void vfree(const void *addr);
    extern void *vmap(struct page **pages, unsigned int count,
                      unsigned long flags, pgprot_t prot);
    extern void vunmap(const void *addr);

2.3 VMA operations

    extern struct vm_area_struct *find_vma(struct mm_struct *mm, unsigned long addr);
    extern struct vm_area_struct *find_vma_prev(struct mm_struct *mm, unsigned long addr,
                                                struct vm_area_struct **pprev);

    static inline struct vm_area_struct *find_vma_intersection(struct mm_struct *mm,
            unsigned long start_addr, unsigned long end_addr)
    {
        struct vm_area_struct *vma = find_vma(mm, start_addr);

        if (vma && end_addr <= vma->vm_start)
            vma = NULL;
        return vma;
    }

    static inline unsigned long vma_pages(struct vm_area_struct *vma)
    {
        return (vma->vm_end - vma->vm_start) >> PAGE_SHIFT;
    }

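2.4 Pages

The complex part of memory management lies in page-related operations. The commonly used kernel APIs can be grouped as follows: PG_XXX flag operations, page reference counting, anonymous and KSM pages, page operations, page mapping, page faults, and LRU and page reclaim.

PG_XXX flag operations: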

    PageXXX()
    SetPageXXX()
    ClearPageXXX()
    TestSetPageXXX()
    TestClearPageXXX()

    static inline void lock_page(struct page *page)
    {
        might_sleep();
        if (!trylock_page(page))
            __lock_page(page);
    }

    static inline int trylock_page(struct page *page)
    {
        return (likely(!test_and_set_bit_lock(PG_locked, &page->flags)));
    }

    void __lock_page(struct page *page)
    {
        DEFINE_WAIT_BIT(wait, &page->flags, PG_locked);

        __wait_on_bit_lock(page_waitqueue(page), &wait, bit_wait_io,
                           TASK_UNINTERRUPTIBLE);
    }

    void wait_on_page_bit(struct page *page, int bit_nr);
    void wake_up_page(struct page *page, int bit);
    static inline void wait_on_page_locked(struct page *page);
    static inline void wait_on_page_writeback(struct page *page);

Page reference counting:

    static inline void get_page(struct page *page);
    void put_page(struct page *page);

    #define page_cache_get(page)        get_page(page)
    #define page_cache_release(page)    put_page(page)

    static inline int page_count(struct page *page)
    {
        return atomic_read(&compound_head(page)->_count);
    }

    static inline int page_mapcount(struct page *page)
    {
        VM_BUG_ON_PAGE(PageSlab(page), page);
        return atomic_read(&page->_mapcount) + 1;
    }

    static inline int page_mapped(struct page *page)
    {
        return atomic_read(&(page)->_mapcount) >= 0;
    }

    static inline int put_page_testzero(struct page *page)
    {
        VM_BUG_ON_PAGE(atomic_read(&page->_count) == 0, page);
        return atomic_dec_and_test(&page->_count);
    }
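The "+ 1" in page_mapcount() and the ">= 0" in page_mapped() both follow from _mapcount starting at -1 for an unmapped page. The userspace sketch below models the two counters with C11 atomics; `struct toy_page` and the `toy_` helpers are hypothetical, and the initial _count of 1 is an assumption representing a freshly allocated page.

```c
#include <stdatomic.h>

/* Hypothetical stand-in for the two counters in struct page. */
struct toy_page {
    atomic_int _count;      /* references held on the page */
    atomic_int _mapcount;   /* page-table mappings minus one */
};

/* A fresh page: one reference (assumed), no mappings. */
static void toy_page_init(struct toy_page *p)
{
    atomic_init(&p->_count, 1);
    atomic_init(&p->_mapcount, -1);
}

static int toy_page_mapcount(struct toy_page *p)
{
    return atomic_load(&p->_mapcount) + 1;
}

static int toy_page_mapped(struct toy_page *p)
{
    return atomic_load(&p->_mapcount) >= 0;
}

static void toy_get_page(struct toy_page *p)
{
    atomic_fetch_add(&p->_count, 1);
}

/* Drop one reference; returns 1 only when the count reaches zero. */
static int toy_put_page_testzero(struct toy_page *p)
{
    return atomic_fetch_sub(&p->_count, 1) == 1;
}
```

Only the caller that sees toy_put_page_testzero() return 1 may free the page, which mirrors how __free_pages() gates the actual release on put_page_testzero().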

Anonymous pages and KSM pages:

    static inline int PageAnon(struct page *page)
    {
        return ((unsigned long)page->mapping & PAGE_MAPPING_ANON) != 0;
    }

    static inline int PageKsm(struct page *page)
    {
        return ((unsigned long)page->mapping & PAGE_MAPPING_FLAGS) ==
                (PAGE_MAPPING_ANON | PAGE_MAPPING_KSM);
    }

    struct address_space *page_mapping(struct page *page);

    static inline void *page_rmapping(struct page *page)
    {
        return (void *)((unsigned long)page->mapping & ~PAGE_MAPPING_FLAGS);
    }

    void page_add_new_anon_rmap(struct page *, struct vm_area_struct *, unsigned long);
    void page_add_file_rmap(struct page *);
    void page_remove_rmap(struct page *);
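PageAnon() and PageKsm() work because page->mapping is a pointer to an aligned object, so its low bits are free to carry type tags. The userspace sketch below illustrates the tagging with uintptr_t; the tag values 1 and 2 are assumptions for the example, and the `toy_` names are hypothetical.

```c
#include <stdint.h>

/* Assumed tag values stored in the low bits of the mapping pointer. */
#define TOY_MAPPING_ANON  1u
#define TOY_MAPPING_KSM   2u
#define TOY_MAPPING_FLAGS (TOY_MAPPING_ANON | TOY_MAPPING_KSM)

/* Tag a pointer to an (aligned) anon_vma-like object as anonymous. */
static uintptr_t toy_tag_anon(const void *anon_vma)
{
    return (uintptr_t)anon_vma | TOY_MAPPING_ANON;
}

static int toy_page_anon(uintptr_t mapping)
{
    return (mapping & TOY_MAPPING_ANON) != 0;
}

/* KSM pages carry both tags. */
static int toy_page_ksm(uintptr_t mapping)
{
    return (mapping & TOY_MAPPING_FLAGS) == TOY_MAPPING_FLAGS;
}

/* Strip the tags to get the real pointer back, as page_rmapping() does. */
static void *toy_page_rmapping(uintptr_t mapping)
{
    return (void *)(mapping & ~(uintptr_t)TOY_MAPPING_FLAGS);
}
```

The scheme relies on the pointed-to object being at least 4-byte aligned; otherwise the low bits would be part of the address and could not be reused.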

Page operations:

    static inline struct page *follow_page(struct vm_area_struct *vma,
            unsigned long address, unsigned int foll_flags);

    struct page *vm_normal_page(struct vm_area_struct *vma, unsigned long addr,
                                pte_t pte);

    long get_user_pages(struct task_struct *tsk, struct mm_struct *mm,
                        unsigned long start, unsigned long nr_pages,
                        int write, int force, struct page **pages,
                        struct vm_area_struct **vmas);

    long get_user_pages_locked(struct task_struct *tsk, struct mm_struct *mm,
                               unsigned long start, unsigned long nr_pages,
                               int write, int force, struct page **pages,
                               int *locked);

Page mapping:

    unsigned long do_mmap_pgoff(struct file *file, unsigned long addr,
                                unsigned long len, unsigned long prot,
                                unsigned long flags, unsigned long pgoff,
                                unsigned long *populate);
    int do_munmap(struct mm_struct *, unsigned long, size_t);
    int remap_pfn_range(struct vm_area_struct *, unsigned long addr,
                        unsigned long pfn, unsigned long size, pgprot_t);

Page faults:

    static int __kprobes do_page_fault(unsigned long addr, unsigned int fsr,
                                       struct pt_regs *regs);

    static int handle_pte_fault(struct mm_struct *mm,
                                struct vm_area_struct *vma, unsigned long address,
                                pte_t *pte, pmd_t *pmd, unsigned int flags);

    static int do_anonymous_page(struct mm_struct *mm, struct vm_area_struct *vma,
                                 unsigned long address, pte_t *page_table, pmd_t *pmd,
                                 unsigned int flags);

    static int do_wp_page(struct mm_struct *mm, struct vm_area_struct *vma,
                          unsigned long address, pte_t *page_table, pmd_t *pmd,
                          spinlock_t *ptl, pte_t orig_pte);

LRU and page reclaim:

    void lru_cache_add(struct page *);

    #define lru_to_page(_head) (list_entry((_head)->prev, struct page, lru))

    bool zone_watermark_ok(struct zone *z, unsigned int order,
                           unsigned long mark, int classzone_idx, int alloc_flags);
    bool zone_watermark_ok_safe(struct zone *z, unsigned int order,
                                unsigned long mark, int classzone_idx, int alloc_flags);
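lru_to_page() takes the entry at the tail of a list (head->prev) and converts the embedded list node back into its struct page. The userspace sketch below reproduces that pattern with a minimal doubly linked list and an offsetof-based list_entry; all `toy_` names are hypothetical stand-ins for the kernel's list helpers.

```c
#include <stddef.h>

/* Minimal circular doubly linked list node. */
struct toy_list_head {
    struct toy_list_head *next, *prev;
};

/* Hypothetical page descriptor with an embedded LRU list node. */
struct toy_page {
    unsigned long flags;
    struct toy_list_head lru;
};

/* Recover the containing structure from a pointer to its member. */
#define toy_list_entry(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* The page at the tail of the list, as lru_to_page() does. */
#define toy_lru_to_page(head) \
    toy_list_entry((head)->prev, struct toy_page, lru)

static void toy_list_init(struct toy_list_head *head)
{
    head->next = head;
    head->prev = head;
}

/* Insert `entry` right after `head`, i.e. at the front of the list. */
static void toy_list_add(struct toy_list_head *entry, struct toy_list_head *head)
{
    entry->next = head->next;
    entry->prev = head;
    head->next->prev = entry;
    head->next = entry;
}
```

Since new pages are added at the front, the tail holds the page that has been on the list longest, which is the natural candidate for a reclaim scan to examine first.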


Original author: ArnoldLu

Original article:

https://www.cnblogs.com/arnoldlu/p/8335568.html