Binary Deployment Guide for a Highly Available Kubernetes 1.35.0 Cluster on Rocky Linux

- 2026-02-20 11:46:30

Kubernetes Cluster Deployment Guide

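1. Environment
1.1 System and component versions
1.2 Network plan
2. Preparation
2.1 Install required tools
2.2 Server information
2.3 Configure the hosts file on all nodes
2.4 Disable firewalld and SELinux on all nodes
2.5 Disable swap on all nodes
2.6 Synchronize time on all nodes
2.7 Configure limits on all nodes
2.8 Passwordless SSH from master01 to the other nodes
2.9 Install ipvsadm on all nodes
2.10 Load the IPVS modules at boot on all nodes
2.11 Configure kernel parameters on all nodes
2.12 Check the loaded kernel modules on all nodes
3. Certificate generation
4. Install the container runtime
4.1 Configure kernel parameters
4.2 Install containerd
4.3 Start containerd via systemd
4.4 Install runc
4.5 Install the CNI plugins
4.6 Install crictl
4.7 Configure the systemd cgroup driver
5. Install the high-availability components
5.1 Install HAProxy and Keepalived
5.2 Configure HAProxy on all master nodes
5.3 Configure Keepalived
5.4 Health check configuration
5.5 Start haproxy and keepalived on all master nodes
5.6 VIP test
6. Kubernetes and etcd certificate configuration
6.1 Download the installation packages
6.2 Install etcd
6.3 Install the Kubernetes components
6.4 Check versions
6.5 Install the certificate generation tools
6.6 Generate certificates
7. Kubernetes system component configuration
7.1 etcd configuration
7.2 Create the etcd unit file
7.3 Create the required directories on all nodes
7.4 Configure the kube-apiserver unit file
7.5 Start kube-apiserver
7.6 Configure kube-controller-manager
7.7 Start kube-controller-manager on all master nodes
7.8 Configure kube-scheduler
7.9 TLS Bootstrapping configuration
7.10 Copy the certificates from master01 to the worker nodes
7.11 kubelet configuration
7.12 Add the kube-proxy service file on all Kubernetes nodes
7.13 Add the kube-proxy configuration on all Kubernetes nodes
7.14 Start kube-proxy
8. Install Calico
8.1 Modify the Calico manifest
8.2 Deploy Calico
9. Install CoreDNS
10. Deploy Metrics
11. Cluster verification
11.1 Create a busybox Pod
11.2 Resolve kubernetes in the default namespace from a Pod
11.3 Check cross-namespace resolution
11.4 Every node must be able to reach the kubernetes Service on port 443 and the kube-dns Service on port 53
11.5 Pods must be able to reach each other
12. Install Helm
13. Install k8tz for time synchronization
14. Install an ingress or Gateway API controller
15. kubectl auto-completion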

1. Environment

1.1 System and component versions

OS: Rocky Linux release 10.1 (Red Quartz)
Kernel: 6.12.0-124.8.1.el10_1.x86_64
Kubernetes: v1.35.0
containerd: v2.2.1
CNI plugins: 1.9.0
crictl: 1.35.0
etcd: 3.6.7

Offline installation packages: https://pan.baidu.com/s/19CjX1ImiwQTWqDleWiBwgg (extraction code: 8888)

For a kubeadm-based installation, see the companion article "Rocky Linux+kubeadm实现1.35.0高可用架构深度实践".

1.2 Network plan

Kubernetes Service CIDR: 10.96.0.0/12
Kubernetes Pod CIDR: 10.244.0.0/16

2. Preparation

2.1 Install required tools

# Install common tools on all nodes
yum -y install wget openssl vim net-tools tar zip unzip iptables lsof

2.2 Server information

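Server IP addresses must not be assigned by DHCP; configure them statically. The VIP must not collide with an address already in use on the company network.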

192.168.1.11  master01   # 4C4G 40G
192.168.1.12  master02   # 4C4G 40G
192.168.1.13  master03   # 4C4G 40G
192.168.1.100 master-lb  # VIP
192.168.1.14  node01
192.168.1.15  node02

2.3 Configure the hosts file on all nodes

cat >> /etc/hosts << EOF
192.168.1.11 master01
192.168.1.12 master02
192.168.1.13 master03
192.168.1.100 master-lb
192.168.1.14 node01
192.168.1.15 node02
EOF

2.4 Disable firewalld and SELinux on all nodes

systemctl disable --now firewalld
setenforce 0
sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux
sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

2.5 Disable swap on all nodes

swapoff -a
# Disable swap permanently by commenting out the swap line in /etc/fstab
sed -i.bak '/swap/s/^/#/' /etc/fstab

2.6 Synchronize time on all nodes

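# chrony is the time service shipped with Rocky Linux (there is no package named ntpd)
dnf install -y chrony
systemctl enable --now chronyd
systemctl status chronyd

2.7 Configure limits on all nodes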
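The commands in this section append to /etc/security/limits.conf with >>, so running them a second time duplicates every entry. A minimal sketch of an idempotent alternative follows. The helper name append_once is hypothetical and not part of any tool used in this guide, and the demonstration writes to a scratch file rather than the real limits file.

```shell
#!/usr/bin/env bash
# append_once LINE FILE: append LINE to FILE only if no identical line exists.
# Hypothetical helper, for illustration only.
append_once() {
    local line=$1 file=$2
    # -q quiet, -x whole-line match, -F fixed string (so "*" is not a pattern)
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Demonstration against a scratch file instead of /etc/security/limits.conf.
limits_demo=$(mktemp)
append_once "* soft nofile 65536" "$limits_demo"
append_once "* soft nofile 65536" "$limits_demo"   # second call changes nothing
append_once "* hard nofile 65536" "$limits_demo"
cat "$limits_demo"
```

On a real node the second argument would be /etc/security/limits.conf, with one call per entry listed in this section.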

echo "* soft nofile 65536" >> /etc/security/limits.conf
echo "* hard nofile 65536" >> /etc/security/limits.conf
echo "* soft nproc 65536" >> /etc/security/limits.conf
echo "* hard nproc 65536" >> /etc/security/limits.conf
echo "* soft memlock unlimited" >> /etc/security/limits.conf
echo "* hard memlock unlimited" >> /etc/security/limits.conf

2.8 Passwordless SSH from master01 to the other nodes

ssh-keygen -t rsa   # press Enter at every prompt
for i in master01 master02 master03 node01 node02; do
  ssh-copy-id -i ~/.ssh/id_rsa.pub $i
done

2.9 Install ipvsadm on all nodes

yum install ipvsadm ipset sysstat conntrack libseccomp -y

# Load the IPVS modules on all nodes
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack

2.10 Load the IPVS modules at boot on all nodes

cat > /etc/modules-load.d/ipvs.conf << EOF
ip_vs
ip_vs_lc
ip_vs_wlc
ip_vs_rr
ip_vs_wrr
ip_vs_lblc
ip_vs_lblcr
ip_vs_dh
ip_vs_sh
ip_vs_fo
ip_vs_nq
ip_vs_sed
ip_vs_ftp
nf_conntrack
ip_tables
ip_set
xt_set
ipt_set
ipt_rpfilter
ipt_REJECT
ipip
EOF
systemctl enable --now systemd-modules-load.service

Check that the modules are loaded:

lsmod | grep -e ip_vs -e nf_conntrack

2.11 Configure kernel parameters on all nodes

cat <<EOF > /etc/sysctl.d/k8s.conf
# Network tuning
# Enable IPv4 packet forwarding; CNI plugins such as Calico/Cilium depend on it
net.ipv4.ip_forward = 1
net.ipv4.tcp_tw_reuse = 2
net.ipv4.tcp_timestamps = 1
net.ipv4.tcp_fin_timeout = 30
net.ipv4.conf.all.route_localnet = 1
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.ip_conntrack_max = 65536
net.core.somaxconn = 65535
net.core.netdev_max_backlog = 65536
# Increase the length of the SYN (half-open connection) queue
net.ipv4.tcp_max_syn_backlog = 65536
net.ipv4.tcp_rmem = 4096 12582912 16777216
net.ipv4.tcp_wmem = 4096 12582912 16777216
net.netfilter.nf_conntrack_max = 1048576
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_intvl = 30
net.ipv4.tcp_keepalive_probes = 10
# File system
fs.file-max = 2097152
fs.nr_open = 52706963
fs.may_detach_mounts = 1
fs.inotify.max_user_instances = 8192
fs.inotify.max_user_watches = 524288
# Memory management
vm.swappiness = 0
vm.max_map_count = 262144
vm.overcommit_memory = 1
vm.panic_on_oom = 0
kernel.panic = 10
# Container support
kernel.pid_max = 4194304
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-arptables = 1
# Required by Kubernetes
net.ipv4.conf.all.rp_filter = 0
net.ipv4.conf.default.rp_filter = 0
kernel.softlockup_panic = 1
EOF
sysctl --system

2.12 Check the loaded kernel modules on all nodes

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

3. Certificate generation
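The manifest below hard-codes the bootstrap token c8ad9c.2e4d610cf3e7426e. Kubernetes bootstrap tokens have the form [a-z0-9]{6}.[a-z0-9]{16}, and a real cluster would normally use its own random value rather than one copied from a guide. The following is a sketch only: the function names are hypothetical, and it assumes /dev/urandom, head, od, and tr are available.

```shell
#!/usr/bin/env bash
# gen_bootstrap_token: print "<6 hex chars>.<16 hex chars>".
# Hex digits are a subset of [a-z0-9], so the result fits the token format.
gen_bootstrap_token() {
    local id secret
    id=$(head -c 3 /dev/urandom | od -An -tx1 | tr -d ' \n')
    secret=$(head -c 8 /dev/urandom | od -An -tx1 | tr -d ' \n')
    printf '%s.%s\n' "$id" "$secret"
}

# is_bootstrap_token TOKEN: succeed only if TOKEN matches the expected format.
is_bootstrap_token() {
    [[ $1 =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

token=$(gen_bootstrap_token)
if is_bootstrap_token "$token"; then
    echo "token-id:     ${token%%.*}"
    echo "token-secret: ${token#*.}"
fi
```

If the token is changed, token-id, token-secret, and the Secret name (bootstrap-token-<token-id>) must change together, as must any later step that references the token.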

cat > bootstrap.yaml << EOF
apiVersion: v1
kind: Secret
metadata:
  name: bootstrap-token-c8ad9c
  namespace: kube-system
type: bootstrap.kubernetes.io/token
stringData:
  description: "The default bootstrap token generated by 'kubelet '."
  token-id: c8ad9c
  token-secret: 2e4d610cf3e7426e
  usage-bootstrap-authentication: "true"
  usage-bootstrap-signing: "true"
  auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubelet-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node-bootstrapper
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:nodeclient
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-certificate-rotation
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:nodes
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kube-apiserver
EOF

mkdir pki && cd pki

cat > admin-csr.json << EOF
{
  "CN": "admin",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "system:masters", "OU": "Kubernetes-manual" }
  ]
}
EOF

cat > apiserver-csr.json << EOF
{
  "CN": "kube-apiserver",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "Kubernetes", "OU": "Kubernetes-manual" }
  ]
}
EOF

cat > ca-config.json << EOF
{
  "signing": {
    "default": { "expiry": "876000h" },
    "profiles": {
      "kubernetes": {
        "usages": [ "signing", "key encipherment", "server auth", "client auth" ],
        "expiry": "876000h"
      }
    }
  }
}
EOF

cat > ca-csr.json << EOF
{
  "CN": "kubernetes",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "Kubernetes", "OU": "Kubernetes-manual" }
  ],
  "ca": { "expiry": "876000h" }
}
EOF

cat > etcd-ca-csr.json << EOF
{
  "CN": "etcd",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "etcd", "OU": "Etcd Security" }
  ],
  "ca": { "expiry": "876000h" }
}
EOF

cat > etcd-csr.json << EOF
{
  "CN": "etcd",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "etcd", "OU": "Etcd Security" }
  ]
}
EOF

cat > front-proxy-ca-csr.json << EOF
{
  "CN": "kubernetes",
  "key": { "algo": "rsa", "size": 2048 }
}
EOF

cat > front-proxy-client-csr.json << EOF
{
  "CN": "front-proxy-client",
  "key": { "algo": "rsa", "size": 2048 }
}
EOF

cat > kubelet-csr.json << EOF
{
  "CN": "system:node:\$NODE",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "L": "Beijing", "ST": "Beijing", "O": "system:nodes", "OU": "Kubernetes-manual" }
  ]
}
EOF

cat > kube-proxy-csr.json << EOF
{
  "CN": "system:kube-proxy",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "system:kube-proxy", "OU": "Kubernetes-manual" }
  ]
}
EOF

cat > manager-csr.json << EOF
{
  "CN": "system:kube-controller-manager",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "system:kube-controller-manager", "OU": "Kubernetes-manual" }
  ]
}
EOF

cat > scheduler-csr.json << EOF
{
  "CN": "system:kube-scheduler",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "system:kube-scheduler", "OU": "Kubernetes-manual" }
  ]
}
EOF

4. Install the container runtime

4.1 Configure kernel parameters

Forward IPv4 and let iptables see bridged traffic:

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
overlay
br_netfilter
EOF

sudo modprobe overlay
sudo modprobe br_netfilter

# Apply the sysctl parameters without rebooting
sudo sysctl --system

Confirm that the br_netfilter and overlay modules are loaded:

lsmod | grep br_netfilter
lsmod | grep overlay

Confirm the relevant sysctl values:

sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward

4.2 Install containerd

wget https://github.com/containerd/containerd/releases/download/v2.2.1/containerd-2.2.1-linux-amd64.tar.gz
tar xvf containerd-2.2.1-linux-amd64.tar.gz
mv bin/* /usr/local/bin/
mkdir /etc/containerd
containerd config default > /etc/containerd/config.toml

4.3 Start containerd via systemd

cat > /usr/lib/systemd/system/containerd.service <<EOF
[Unit]
Description=containerd container runtime
Documentation=https://containerd.io
After=network.target dbus.service

[Service]
ExecStartPre=-/sbin/modprobe overlay
ExecStart=/usr/local/bin/containerd
Type=notify
Delegate=yes
KillMode=process
Restart=always
RestartSec=5
LimitNPROC=infinity
LimitCORE=infinity
TasksMax=infinity
OOMScoreAdjust=-999

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable --now containerd

4.4 Install runc

Download: https://github.com/opencontainers/runc/releases/download/v1.4.0/runc.amd64

install -m 755 runc.amd64 /usr/local/sbin/runc

4.5 Install the CNI plugins

Download: https://github.com/containernetworking/plugins/releases/download/v1.9.0/cni-plugins-linux-amd64-v1.9.0.tgz

mkdir -p /opt/cni/bin
tar Cxzvf /opt/cni/bin cni-plugins-linux-amd64-v1.9.0.tgz

4.6 Install crictl

Download: https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.35.0/crictl-v1.35.0-linux-amd64.tar.gz

tar -xf crictl-v1.35.0-linux-amd64.tar.gz -C /usr/local/bin

cat > /etc/crictl.yaml <<EOF
runtime-endpoint: unix:///var/run/containerd/containerd.sock
image-endpoint: unix:///var/run/containerd/containerd.sock
timeout: 30
debug: false
pull-image-on-create: false
EOF

4.7 Configure the systemd cgroup driver
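The manual edit described in this section (adding SystemdCgroup = true on the line after ShimCgroup = '') can also be scripted. The sketch below is one possible alternative, not the author's method. It assumes GNU sed and that the generated file contains a ShimCgroup = '' line as shown in this section; the function name enable_systemd_cgroup is hypothetical.

```shell
#!/usr/bin/env bash
# enable_systemd_cgroup FILE
# Hypothetical helper: make sure FILE contains "SystemdCgroup = true".
enable_systemd_cgroup() {
    local file=$1
    if grep -qE '^[[:space:]]*SystemdCgroup[[:space:]]*=' "$file"; then
        # The key already exists: force its value to true instead of adding a duplicate.
        sed -i -E 's/^([[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*).*/\1true/' "$file"
    else
        # Insert the key on a new line after ShimCgroup, reusing its indentation.
        # "\n" in the replacement is a GNU sed extension.
        sed -i -E "s/^([[:space:]]*)ShimCgroup = ''.*$/&\n\1SystemdCgroup = true/" "$file"
    fi
}

# Usage on a node (back up the file first):
#   cp /etc/containerd/config.toml /etc/containerd/config.toml.bak
#   enable_systemd_cgroup /etc/containerd/config.toml
#   grep -n SystemdCgroup /etc/containerd/config.toml
```

Running the function twice is harmless, because the second run only rewrites the existing value.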

For details on cgroups, see the official documentation.

Edit the corresponding section of /etc/containerd/config.toml:

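[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
  ShimCgroup = ''          # add the next line below this one
  SystemdCgroup = true     # this line is absent by default

Note: the table header above follows the containerd 1.x layout. In the version 3 configuration that containerd 2.x generates, these options are expected under [plugins.'io.containerd.cri.v1.runtime'.containerd.runtimes.runc.options], so search for the ShimCgroup line rather than for the header.

Restart containerd: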

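systemctl restart containerd

5. Install the high-availability components

Note: if the cluster is not highly available, HAProxy and Keepalived are not needed. When installing in a public cloud, skip this chapter as well and use the provider's load balancer instead, such as Alibaba Cloud SLB or Tencent Cloud ELB.

5.1 Install HAProxy and Keepalived

dnf -y install keepalived haproxy

5.2 Configure HAProxy on all master nodes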

cat > /etc/haproxy/haproxy.cfg << EOF
global
  maxconn 2000
  ulimit-n 16384
  log 127.0.0.1 local0 err
  stats timeout 30s

defaults
  log global
  mode http
  option httplog
  timeout connect 5000
  timeout client 50000
  timeout server 50000
  timeout http-request 15s
  timeout http-keep-alive 15s

frontend k8s-master
  bind 0.0.0.0:8443
  mode tcp
  option tcplog
  tcp-request inspect-delay 5s
  default_backend k8s-master

backend k8s-master
  option httpchk GET /healthz
  http-check expect status 200
  mode tcp
  option tcplog
  option tcp-check
  balance roundrobin
  default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
  server master01 192.168.1.11:6443 check
  server master02 192.168.1.12:6443 check
  server master03 192.168.1.13:6443 check
EOF

5.3 Configure Keepalived

5.3.1 Configuration on master01

cat > /etc/keepalived/keepalived.conf << EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state MASTER
    interface ens32
    mcast_src_ip 192.168.1.11
    virtual_router_id 51
    priority 100
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.168.1.100
    }
    track_script {
        chk_apiserver
    }
}
EOF

5.3.2 Configuration on master02

cat > /etc/keepalived/keepalived.conf << EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens32
    mcast_src_ip 192.168.1.12
    virtual_router_id 51
    priority 99
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.168.1.100
    }
    track_script {
        chk_apiserver
    }
}
EOF

5.3.3 Configuration on master03

cat > /etc/keepalived/keepalived.conf << EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens32
    mcast_src_ip 192.168.1.13
    virtual_router_id 51
    priority 98
    nopreempt
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.168.1.100
    }
    track_script {
        chk_apiserver
    }
}
EOF

5.4 Health check configuration
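The health check in this section is written to disk through a here-document. One detail matters for any script created this way: with an unquoted delimiter (<< EOF) the shell expands $(...) and $var while writing the file, whereas a quoted delimiter (<< 'EOF') writes them literally. A small demonstration using scratch files follows; the directory and variable names are illustrative only.

```shell
#!/usr/bin/env bash
demo_dir=$(mktemp -d)
err=7

# Unquoted delimiter: $err is expanded now, while the file is being written.
cat > "$demo_dir/unquoted.sh" << EOF
echo "err is $err"
EOF

# Quoted delimiter: the text is written verbatim and expanded only when the script runs.
cat > "$demo_dir/quoted.sh" << 'EOF'
echo "err is $err"
EOF

cat "$demo_dir/unquoted.sh"   # echo "err is 7"
cat "$demo_dir/quoted.sh"     # echo "err is $err"
```

For a script that computes values at run time, such as a loop over $(seq 1 3) or a $(pgrep ...) call, the quoted form is the one that preserves the intended logic.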

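# The delimiter is quoted ('EOF') so that \$(...) and variables are written
# literally instead of being expanded while the file is created.
cat > /etc/keepalived/check_apiserver.sh << 'EOF'
#!/bin/bash
# Stop keepalived (releasing the VIP) if haproxy is not running after 3 checks.
err=0
for k in $(seq 1 3)
do
    check_code=$(pgrep haproxy)
    if [[ $check_code == "" ]]; then
        err=$(expr $err + 1)
        sleep 1
        continue
    else
        err=0
        break
    fi
done

if [[ $err != "0" ]]; then
    echo "systemctl stop keepalived"
    /usr/bin/systemctl stop keepalived
    exit 1
else
    exit 0
fi
EOF

chmod +x /etc/keepalived/check_apiserver.sh

5.5 Start haproxy and keepalived on all master nodes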

systemctl daemon-reload
systemctl enable --now haproxy
systemctl enable --now keepalived
systemctl status haproxy keepalived

5.6 VIP test

ping 192.168.1.100

6. Kubernetes and etcd certificate configuration

6.1 Download the installation packages

etcd package: https://github.com/etcd-io/etcd/releases/download/v3.6.7/etcd-v3.6.7-linux-amd64.tar.gz
Kubernetes 1.35.0 package: https://dl.k8s.io/v1.35.0/kubernetes-server-linux-amd64.tar.gz

Note: once downloaded, copy both packages to master01 only.
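Because the packages are downloaded on one machine and then copied to master01, it may be worth confirming that the copies are intact. The following sketch uses sha256sum from coreutils. The helper name verify_sha256 is hypothetical, and the expected digest must be taken from the upstream release page; none is reproduced here.

```shell
#!/usr/bin/env bash
# verify_sha256 FILE EXPECTED_DIGEST
# Hypothetical helper: returns 0 on match, 1 on mismatch, 2 if FILE is unreadable.
verify_sha256() {
    local file=$1 expected=$2 actual
    [[ -r $file ]] || { echo "cannot read: $file" >&2; return 2; }
    actual=$(sha256sum "$file" | awk '{print $1}')
    if [[ $actual == "$expected" ]]; then
        echo "OK: $file"
    else
        echo "MISMATCH: $file" >&2
        return 1
    fi
}

# Usage (replace the placeholder with the digest published for the release):
#   verify_sha256 etcd-v3.6.7-linux-amd64.tar.gz "<published sha256>"
#   verify_sha256 kubernetes-server-linux-amd64.tar.gz "<published sha256>"
```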

Required image versions:

registry.k8s.io/kube-apiserver:v1.35.0
registry.k8s.io/kube-controller-manager:v1.35.0
registry.k8s.io/kube-scheduler:v1.35.0
registry.k8s.io/kube-proxy:v1.35.0
registry.k8s.io/coredns/coredns:v1.13.1
registry.k8s.io/pause:3.10.1
registry.k8s.io/etcd:3.6.6-0

The command reports the etcd image version as 3.6.6. Pulling that tag fails; appending -0 (3.6.6-0) fixes it. This will probably be corrected in a later release.

6.2 Install etcd

tar -zxvf etcd-v3.6.7-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.6.7-linux-amd64/etcd{,ctl}

6.3 Install the Kubernetes components

tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

6.4 Check versions

kubelet --version
etcdctl version

# Send the components to the other nodes
MasterNodes='master02 master03'
WorkNodes='node01 node02'
for NODE in $MasterNodes; do
  echo $NODE
  scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/
  scp /usr/local/bin/etcd* $NODE:/usr/local/bin/
done
for NODE in $WorkNodes; do
  scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/
done

6.5 Install the certificate generation tools

# Download the certificate generation tools on master01
wget "https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssl_1.6.4_linux_amd64" -O /usr/local/bin/cfssl
wget "https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssljson_1.6.4_linux_amd64" -O /usr/local/bin/cfssljson

# Or use local copies
cp cfssl /usr/local/bin/cfssl
cp cfssljson /usr/local/bin/cfssljson

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

6.6 Generate certificates

cd pki

# Create the Kubernetes directories on all nodes
mkdir -p /etc/etcd/ssl
mkdir -p /etc/kubernetes/pki

6.6.1 Generate the etcd CA certificate and its key

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

cfssl gencert \
  -ca=/etc/etcd/ssl/etcd-ca.pem \
  -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \
  -config=ca-config.json \
  -hostname=127.0.0.1,master01,master02,master03,192.168.1.11,192.168.1.12,192.168.1.13 \
  -profile=kubernetes \
  etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd

Send the certificates to the other master nodes:

MasterNodes='master02 master03'
for NODE in $MasterNodes; do
  ssh $NODE "mkdir -p /etc/etcd/ssl"
  for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do
    scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}
  done
done

6.6.2 Generate the Kubernetes component certificates

Generate the Kubernetes certificates on master01.

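10.96.0.1 is the first address of the Kubernetes Service CIDR (the ClusterIP of the kubernetes Service). If you change the Service CIDR, change 10.96.0.1 to match. For a cluster that is not highly available, replace 192.168.1.100 with the IP of master01.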
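To make that relationship concrete: the first SAN, 10.96.0.1, is the network address of the Service CIDR plus one. The sketch below derives it for any IPv4 CIDR using plain bash arithmetic. The helper names are hypothetical, and octets are assumed to be written without leading zeros.

```shell
#!/usr/bin/env bash
# ip_to_int A.B.C.D: print the address as a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# int_to_ip N: print a 32-bit integer in dotted-quad form.
int_to_ip() {
    local n=$1
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# first_service_ip CIDR: network address of CIDR plus one.
first_service_ip() {
    local net=${1%/*} bits=${1#*/}
    local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    int_to_ip $(( ($(ip_to_int "$net") & mask) + 1 ))
}

first_service_ip 10.96.0.0/12    # prints 10.96.0.1
```

With a different Service CIDR, the printed address is the value to put first in the -hostname list of the apiserver certificate.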

cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -hostname=10.96.0.1,192.168.1.100,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,192.168.1.11,192.168.1.12,192.168.1.13 \
  -profile=kubernetes \
  apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

Generate the apiserver aggregation-layer certificates (used by the --requestheader-client-xxx and --requestheader-allowed-xxx flags; allowed name: aggregator):

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

cfssl gencert \
  -ca=/etc/kubernetes/pki/front-proxy-ca.pem \
  -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

Warnings in the output can be ignored.

Generate the controller-manager certificate:

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

Note: for a cluster that is not highly available, replace 192.168.1.100:8443 with the address of master01 and 8443 with the apiserver port (6443 by default).

set-cluster: define a cluster entry

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://192.168.1.100:8443 \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

set-context: define a context entry

kubectl config set-context system:kube-controller-manager@kubernetes \
  --cluster=kubernetes \
  --user=system:kube-controller-manager \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

set-credentials: define a user entry

kubectl config set-credentials system:kube-controller-manager \
  --client-certificate=/etc/kubernetes/pki/controller-manager.pem \
  --client-key=/etc/kubernetes/pki/controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

use-context: make a context the default

kubectl config use-context system:kube-controller-manager@kubernetes \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

Generate the kube-scheduler certificate:

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

Note: for a cluster that is not highly available, replace 192.168.1.100:8443 with the address of master01 and 8443 with the apiserver port (6443 by default).

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://192.168.1.100:8443 \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \
  --client-certificate=/etc/kubernetes/pki/scheduler.pem \
  --client-key=/etc/kubernetes/pki/scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \
  --cluster=kubernetes \
  --user=system:kube-scheduler \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

Generate the admin certificate:

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

Note: for a cluster that is not highly available, replace 192.168.1.100:8443 with the address of master01 and 8443 with the apiserver port (6443 by default).

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://192.168.1.100:8443 \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-credentials kubernetes-admin \
  --client-certificate=/etc/kubernetes/pki/admin.pem \
  --client-key=/etc/kubernetes/pki/admin-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-context kubernetes-admin@kubernetes \
  --cluster=kubernetes \
  --user=kubernetes-admin \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config use-context kubernetes-admin@kubernetes \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

Create the ServiceAccount key pair (the secret used for ServiceAccount tokens):

openssl genrsa -out /etc/kubernetes/pki/sa.key 2048
openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub

Create the kube-proxy certificate:

Note: for a cluster that is not highly available, replace 192.168.1.100:8443 with the address of master01 and 8443 with the apiserver port (6443 by default).
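The same four kubectl config calls (set-cluster, set-credentials, set-context, use-context) are repeated in this section for each component, with only the names, certificate paths, and output file changing. The following sketch wraps them so that the API server address lives in a single variable. The function name gen_kubeconfig and the APISERVER and PKI variables are hypothetical; the kubectl flags are the ones already used in this section.

```shell
#!/usr/bin/env bash
# For a non-HA cluster, set APISERVER to https://<master01 IP>:6443 instead.
APISERVER=${APISERVER:-https://192.168.1.100:8443}
PKI=${PKI:-/etc/kubernetes/pki}

# gen_kubeconfig USER CERT_BASENAME OUTPUT_FILE
# Hypothetical wrapper around the four kubectl config steps.
gen_kubeconfig() {
    local user=$1 cert=$2 out=$3
    kubectl config set-cluster kubernetes \
        --certificate-authority="${PKI}/ca.pem" \
        --embed-certs=true \
        --server="${APISERVER}" \
        --kubeconfig="${out}" &&
    kubectl config set-credentials "${user}" \
        --client-certificate="${PKI}/${cert}.pem" \
        --client-key="${PKI}/${cert}-key.pem" \
        --embed-certs=true \
        --kubeconfig="${out}" &&
    kubectl config set-context "${user}@kubernetes" \
        --cluster=kubernetes \
        --user="${user}" \
        --kubeconfig="${out}" &&
    kubectl config use-context "${user}@kubernetes" \
        --kubeconfig="${out}"
}

# Example, equivalent to the kube-proxy commands in this section:
#   gen_kubeconfig kube-proxy kube-proxy /etc/kubernetes/kube-proxy.kubeconfig
```

Keeping the address in one variable means the non-HA adjustment described in the note above is made once rather than in every command.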

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  kube-proxy-csr.json | cfssljson -bare /etc/kubernetes/pki/kube-proxy

# For a non-HA cluster, change --server=https://192.168.1.100:8443 to the master node's IP
kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://192.168.1.100:8443 \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=/etc/kubernetes/pki/kube-proxy.pem \
  --client-key=/etc/kubernetes/pki/kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-context kube-proxy@kubernetes \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config use-context kube-proxy@kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

Send the certificates to the other master nodes:

for NODE in master02 master03; do
  for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do
    scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}
  done
  for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do
    scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}
  done
done

If the following count is 26, all certificate files are in place:

ls /etc/kubernetes/pki/ | wc -l

7. Kubernetes system component configuration

7.1 etcd configuration
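The three configuration files in this section are identical except for the member name and the node's own IP address. The sketch below prints only those node-specific keys from a name and an IP, which may help when adapting the files to other addresses. The helper etcd_node_settings is hypothetical and emits only keys that already appear in the files of this section.

```shell
#!/usr/bin/env bash
# etcd_node_settings NAME IP
# Hypothetical helper: print the per-node keys of etcd.config.yml.
etcd_node_settings() {
    local name=$1 ip=$2
    cat << EOF
name: '${name}'
listen-peer-urls: 'https://${ip}:2380'
listen-client-urls: 'https://${ip}:2379,http://127.0.0.1:2379'
initial-advertise-peer-urls: 'https://${ip}:2380'
advertise-client-urls: 'https://${ip}:2379'
EOF
}

etcd_node_settings master02 192.168.1.12
```

Every other key, including initial-cluster, is the same on all three members.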

master01 configuration

cat > /etc/etcd/etcd.config.yml << EOF
name: 'master01'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.168.1.11:2380'
listen-client-urls: 'https://192.168.1.11:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.168.1.11:2380'
advertise-client-urls: 'https://192.168.1.11:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://192.168.1.11:2380,master02=https://192.168.1.12:2380,master03=https://192.168.1.13:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

master02 configuration

cat > /etc/etcd/etcd.config.yml << EOF
name: 'master02'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.168.1.12:2380'
listen-client-urls: 'https://192.168.1.12:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.168.1.12:2380'
advertise-client-urls: 'https://192.168.1.12:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://192.168.1.11:2380,master02=https://192.168.1.12:2380,master03=https://192.168.1.13:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

master03 configuration

cat > /etc/etcd/etcd.config.yml << EOF
name: 'master03'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.168.1.13:2380'
listen-client-urls: 'https://192.168.1.13:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.168.1.13:2380'
advertise-client-urls: 'https://192.168.1.13:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://192.168.1.11:2380,master02=https://192.168.1.12:2380,master03=https://192.168.1.13:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

7.2 Create the etcd unit file

Create the etcd service on all master nodes and start it.

cat > /usr/lib/systemd/system/etcd.service << EOF
[Unit]
Description=Etcd Service
Documentation=https://coreos.com/etcd/docs/latest/
After=network.target

[Service]
Type=notify
ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml
Restart=on-failure
RestartSec=10
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
Alias=etcd3.service
EOF

Create the etcd certificate directory on all master nodes:

mkdir /etc/kubernetes/pki/etcd
ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/
systemctl daemon-reload
systemctl enable --now etcd

Check the etcd status:

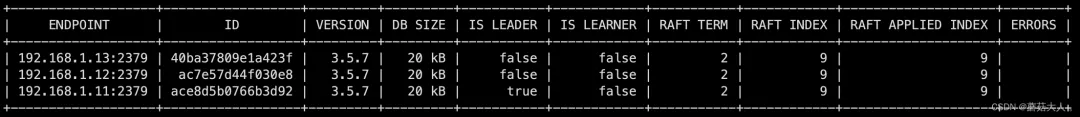

etcdctl --endpoints="192.168.1.13:2379,192.168.1.12:2379,192.168.1.11:2379" \--cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem \--cert=/etc/kubernetes/pki/etcd/etcd.pem \--key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status -w table输出如下

7.3 Create the required directories on all nodes

mkdir -p /etc/kubernetes/manifests/ /etc/systemd/system/kubelet.service.d /var/lib/kubelet /var/log/kubernetes

7.4 Configure the kube-apiserver unit file

Note: this guide uses 10.96.0.0/12 as the Kubernetes Service CIDR. It must not overlap with the host network or with the Pod CIDR; change it as needed.
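The non-overlap requirement can be checked mechanically before any unit file is written. The sketch below compares two IPv4 CIDRs using bash arithmetic. The helper names are hypothetical, and the host network is assumed to be 192.168.1.0/24, since the guide lists host addresses in 192.168.1.x but does not state the netmask.

```shell
#!/usr/bin/env bash
# ip_to_int A.B.C.D: print the address as a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# cidr_range CIDR: print "<first> <last>" addresses of the block as integers.
cidr_range() {
    local net=${1%/*} bits=${1#*/}
    local size=$(( 1 << (32 - bits) ))
    local start=$(( $(ip_to_int "$net") & ~(size - 1) & 0xFFFFFFFF ))
    echo "$start $(( start + size - 1 ))"
}

# cidrs_overlap CIDR1 CIDR2: succeed if the two blocks share any address.
cidrs_overlap() {
    local s1 e1 s2 e2
    read -r s1 e1 <<< "$(cidr_range "$1")"
    read -r s2 e2 <<< "$(cidr_range "$2")"
    (( s1 <= e2 && s2 <= e1 ))
}

SERVICE_CIDR=10.96.0.0/12
POD_CIDR=10.244.0.0/16
HOST_CIDR=192.168.1.0/24   # assumption: the netmask is not stated in this guide

for other in "$POD_CIDR" "$HOST_CIDR"; do
    if cidrs_overlap "$SERVICE_CIDR" "$other"; then
        echo "overlap: $SERVICE_CIDR and $other"
    else
        echo "ok: $SERVICE_CIDR and $other"
    fi
done
```

With the values used in this guide both checks report ok; 10.96.0.0/12 spans 10.96.0.0 through 10.111.255.255, which touches neither of the other two ranges.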

master01 configuration

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \\
      --v=2 \\
      --allow-privileged=true \\
      --bind-address=0.0.0.0 \\
      --secure-port=6443 \\
      --advertise-address=192.168.1.11 \\
      --service-cluster-ip-range=10.96.0.0/12 \\
      --service-node-port-range=30000-32767 \\
      --etcd-servers=https://192.168.1.11:2379,https://192.168.1.12:2379,https://192.168.1.13:2379 \\
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \\
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \\
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\
      --client-ca-file=/etc/kubernetes/pki/ca.pem \\
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \\
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \\
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \\
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\
      --authorization-mode=Node,RBAC \\
      --enable-bootstrap-token-auth=true \\
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \\
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \\
      --requestheader-allowed-names=aggregator \\
      --requestheader-group-headers=X-Remote-Group \\
      --requestheader-extra-headers-prefix=X-Remote-Extra- \\
      --requestheader-username-headers=X-Remote-User \\
      --enable-aggregator-routing=true
# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

master02 configuration

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \\
      --v=2 \\
      --allow-privileged=true \\
      --bind-address=0.0.0.0 \\
      --secure-port=6443 \\
      --advertise-address=192.168.1.12 \\
      --service-cluster-ip-range=10.96.0.0/12 \\
      --service-node-port-range=30000-32767 \\
      --etcd-servers=https://192.168.1.11:2379,https://192.168.1.12:2379,https://192.168.1.13:2379 \\
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \\
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \\
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\
      --client-ca-file=/etc/kubernetes/pki/ca.pem \\
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \\
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \\
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \\
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\
      --authorization-mode=Node,RBAC \\
      --enable-bootstrap-token-auth=true \\
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \\
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \\
      --requestheader-allowed-names=aggregator \\
      --requestheader-group-headers=X-Remote-Group \\
      --requestheader-extra-headers-prefix=X-Remote-Extra- \\
      --requestheader-username-headers=X-Remote-User \\
      --enable-aggregator-routing=true
# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

master03 configuration

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \\
      --v=2 \\
      --allow-privileged=true \\
      --bind-address=0.0.0.0 \\
      --secure-port=6443 \\
      --advertise-address=192.168.1.13 \\
      --service-cluster-ip-range=10.96.0.0/12 \\
      --service-node-port-range=30000-32767 \\
      --etcd-servers=https://192.168.1.11:2379,https://192.168.1.12:2379,https://192.168.1.13:2379 \\
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \\
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \\
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\
      --client-ca-file=/etc/kubernetes/pki/ca.pem \\
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \\
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \\
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \\
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\
      --authorization-mode=Node,RBAC \\
      --enable-bootstrap-token-auth=true \\
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \\
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \\
      --requestheader-allowed-names=aggregator \\
      --requestheader-group-headers=X-Remote-Group \\
      --requestheader-extra-headers-prefix=X-Remote-Extra- \\
      --requestheader-username-headers=X-Remote-User \\
      --enable-aggregator-routing=true
# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

7.5 Start kube-apiserver

Start kube-apiserver on all master nodes

systemctl daemon-reload
systemctl enable --now kube-apiserver
systemctl status kube-apiserver

7.6 Configure kube-controller-manager

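Configure kube-controller-manager on all master nodes

Note: this document uses 10.244.0.0/16 as the k8s Pod network. It must not overlap with the host network or the k8s Service network; change it as needed.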
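The non-overlap requirement can be checked before any configuration is written. The following is a minimal sketch using plain shell arithmetic. The function names (`ip_to_int`, `cidr_range`, `cidrs_overlap`) are hypothetical helpers, and the host network 192.168.1.0/24 in the usage example is an assumption based on the node addresses in this guide, since the actual netmask is not stated.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
# The body runs in a subshell so the IFS change does not leak out.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Print "start end" (inclusive integer range) for a CIDR such as 10.244.0.0/16.
cidr_range() (
  ip=${1%/*}
  bits=${1#*/}
  base=$(ip_to_int "$ip")
  size=$(( 1 << (32 - bits) ))
  start=$(( base / size * size ))   # align down to the network address
  echo "$start $(( start + size - 1 ))"
)

# Return 0 (true) if the two CIDRs share at least one address.
cidrs_overlap() {
  # Both ranges are expanded before "set" replaces the positional parameters.
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Usage: compare the Pod CIDR with the Service CIDR and the assumed host network.
POD_CIDR=10.244.0.0/16
for other in 10.96.0.0/12 192.168.1.0/24; do
  if cidrs_overlap "$POD_CIDR" "$other"; then
    echo "overlap: $POD_CIDR and $other"
  else
    echo "ok: $POD_CIDR and $other"
  fi
done
```

If you change the Pod network, rerun the check with your own values before editing --cluster-cidr.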

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-controller-manager \\
      --v=2 \\
      --bind-address=127.0.0.1 \\
      --root-ca-file=/etc/kubernetes/pki/ca.pem \\
      --cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \\
      --cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\
      --service-account-private-key-file=/etc/kubernetes/pki/sa.key \\
      --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \\
      --leader-elect=true \\
      --use-service-account-credentials=true \\
      --node-monitor-grace-period=40s \\
      --node-monitor-period=5s \\
      --controllers=*,bootstrapsigner,tokencleaner \\
      --allocate-node-cidrs=true \\
      --cluster-cidr=10.244.0.0/16 \\
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\
      --node-cidr-mask-size=24 \\
      --node-eviction-rate=0.1

Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

7.7 Start kube-controller-manager on all master nodes

systemctl daemon-reload
systemctl enable --now kube-controller-manager
systemctl status kube-controller-manager

7.8 Configure kube-scheduler

Configure the kube-scheduler service on all master nodes

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-scheduler \\
      --v=2 \\
      --bind-address=127.0.0.1 \\
      --leader-elect=true \\
      --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

Start the service

systemctl daemon-reload
systemctl enable --now kube-scheduler
systemctl status kube-scheduler

7.9 TLS Bootstrapping configuration

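What bootstrapping does:

It automatically generates a client certificate and key for each kubelet to use when accessing the API server. It enables automatic rotation and expiry management of kubelet certificates. It simplifies certificate management by removing the need to generate certificates for each node by hand.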
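The kubelet authenticates during bootstrapping with a bootstrap token, which Kubernetes expects in the form of a 6-character ID, a dot, and a 16-character secret, all lowercase letters or digits. The sketch below validates that format and splits a token into its two halves. The function names are hypothetical; the sample token is the one used in this guide. The bootstrap.yaml file itself is not shown in this section, so the assumption here is that it defines a Secret whose token ID and secret match the token placed in the kubeconfig.

```shell
# Return 0 if the argument has the bootstrap token shape:
# 6 characters, a dot, 16 characters, all from [a-z0-9].
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Split a token into its public ID and its secret part.
token_id()     { printf '%s\n' "${1%%.*}"; }
token_secret() { printf '%s\n' "${1#*.}"; }

# Usage with the token from this guide.
TOKEN=c8ad9c.2e4d610cf3e7426e
if is_bootstrap_token "$TOKEN"; then
  echo "token id: $(token_id "$TOKEN")"
else
  echo "invalid bootstrap token format" >&2
fi
```

If you generate your own token, keep both halves in sync between bootstrap.yaml and the --token value passed to kubectl config set-credentials.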

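Create the bootstrap configuration on Master01

Note: if this is not a high-availability cluster, replace 192.168.1.100:8443 with the address of master01 and the apiserver port (6443 by default).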

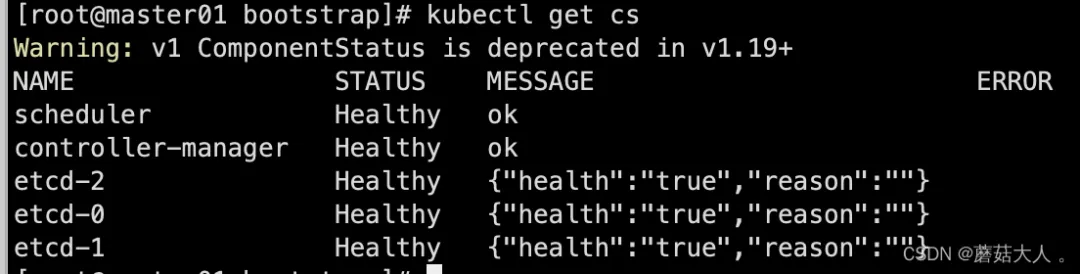

cd pki
kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://192.168.1.100:8443 \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
kubectl config set-credentials tls-bootstrap-token-user \
  --token=c8ad9c.2e4d610cf3e7426e \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
kubectl config set-context tls-bootstrap-token-user@kubernetes \
  --cluster=kubernetes \
  --user=tls-bootstrap-token-user \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
kubectl config use-context tls-bootstrap-token-user@kubernetes \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
mkdir -p /root/.kube ; cp /etc/kubernetes/admin.kubeconfig /root/.kube/config
kubectl create -f bootstrap.yaml

Check the cluster status; if everything is healthy, continue with the following steps.

kubectl get cs

7.10 Copy the certificates from master01 to the other nodes
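Before copying anything, it can help to print what the copy loop in this step is going to do. The following dry-run sketch only echoes the scp commands; `plan_cert_copy` is a hypothetical helper name, and the file list mirrors the one used in this step.

```shell
# Print the scp commands for each target node without executing them.
plan_cert_copy() {
  for node in "$@"; do
    # etcd client certificates
    for f in etcd-ca.pem etcd.pem etcd-key.pem; do
      echo "scp /etc/etcd/ssl/$f $node:/etc/etcd/ssl/"
    done
    # cluster CA, front-proxy CA and the bootstrap kubeconfig
    for f in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
      echo "scp /etc/kubernetes/$f $node:/etc/kubernetes/$f"
    done
  done
}

# Usage: list the copies for the nodes in this guide.
plan_cert_copy master02 master03 node01 node02
```

Note the space between the source path and the node:destination pair in each command; without it scp receives a single malformed argument.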

cd /etc/kubernetes/
for NODE in master02 master03 node01 node02; do
  ssh $NODE mkdir -p /etc/kubernetes/pki /etc/etcd/ssl
  for FILE in etcd-ca.pem etcd.pem etcd-key.pem; do
    scp /etc/etcd/ssl/$FILE $NODE:/etc/etcd/ssl/
  done
  for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
    scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}
  done
done

7.11 kubelet configuration

7.11.1 Use containerd as the runtime

Create the required directories on all nodes

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

Import the image

ctr -n k8s.io i import registry.k8s.io-pause-3.10.1.tar

7.11.2 Create the kubelet service on all k8s nodes

cat > /usr/lib/systemd/system/kubelet.service << EOF
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes
After=containerd.service
Requires=containerd.service

[Service]
ExecStart=/usr/local/bin/kubelet \\
      --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig \\
      --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \\
      --config=/etc/kubernetes/kubelet-conf.yml \\
      --container-runtime-endpoint=unix:///run/containerd/containerd.sock \\
      --node-labels=node.kubernetes.io/node=

[Install]
WantedBy=multi-user.target
EOF

7.11.3 Create the kubelet configuration file on all k8s nodes

cat > /etc/kubernetes/kubelet-conf.yml << EOF
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
resolvConf: /etc/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s
EOF

7.11.4 Start kubelet

systemctl daemon-reload
systemctl enable --now kubelet
systemctl status kubelet

Check the cluster

kubectl get node
NAME       STATUS     ROLES    AGE   VERSION
master01   NotReady   <none>   26m   v1.34.1
master02   NotReady   <none>   12m   v1.34.1
master03   NotReady   <none>   12m   v1.34.1
node01     NotReady   <none>   12m   v1.34.1
node02     NotReady   <none>   12m   v1.34.1

7.12 Add the kube-proxy service file on all k8s nodes

cat > /usr/lib/systemd/system/kube-proxy.service << EOF
[Unit]
Description=Kubernetes Kube Proxy
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-proxy \\
      --config=/etc/kubernetes/kube-proxy.yaml \\
      --v=2

Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

7.13 Add the kube-proxy configuration on all k8s nodes

cat > /etc/kubernetes/kube-proxy.yaml << EOF
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
  qps: 5
clusterCIDR: 10.244.0.0/16
configSyncPeriod: 15m0s
conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s
enableProfiling: false
healthzBindAddress: 0.0.0.0:10256
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s
ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s
kind: KubeProxyConfiguration
metricsBindAddress: 127.0.0.1:10249
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: -999
portRange: ""
udpIdleTimeout: 250ms
EOF

7.14 Start kube-proxy

## Sync the kube-proxy kubeconfig to the other nodes
for i in master02 master03 node01 node02; do
  scp /etc/kubernetes/kube-proxy.kubeconfig $i:/etc/kubernetes/kube-proxy.kubeconfig
done
systemctl daemon-reload
systemctl enable --now kube-proxy
systemctl status kube-proxy

8 Install Calico

Download: https://github.com/projectcalico/calico/blob/v3.30.3/manifests/calico-etcd.yaml
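The link above is a GitHub "blob" page, which returns HTML rather than the YAML file when fetched from the command line. A minimal sketch for deriving the raw-content URL follows; `github_raw_url` is a hypothetical helper, and it assumes the manifest exists at that tag and path as the link implies.

```shell
# Rewrite https://github.com/<owner>/<repo>/blob/<ref>/<path>
# to      https://raw.githubusercontent.com/<owner>/<repo>/<ref>/<path>
github_raw_url() {
  printf '%s\n' "$1" |
    sed -E 's#^https://github\.com/([^/]+)/([^/]+)/blob/(.+)$#https://raw.githubusercontent.com/\1/\2/\3#'
}

# Usage: print the raw URL for the manifest used in this guide.
github_raw_url https://github.com/projectcalico/calico/blob/v3.30.3/manifests/calico-etcd.yaml
```

The printed URL can then be passed to a downloader, for example curl -fsSLO "$(github_raw_url <blob-url>)", which saves the file as calico-etcd.yaml in the current directory.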

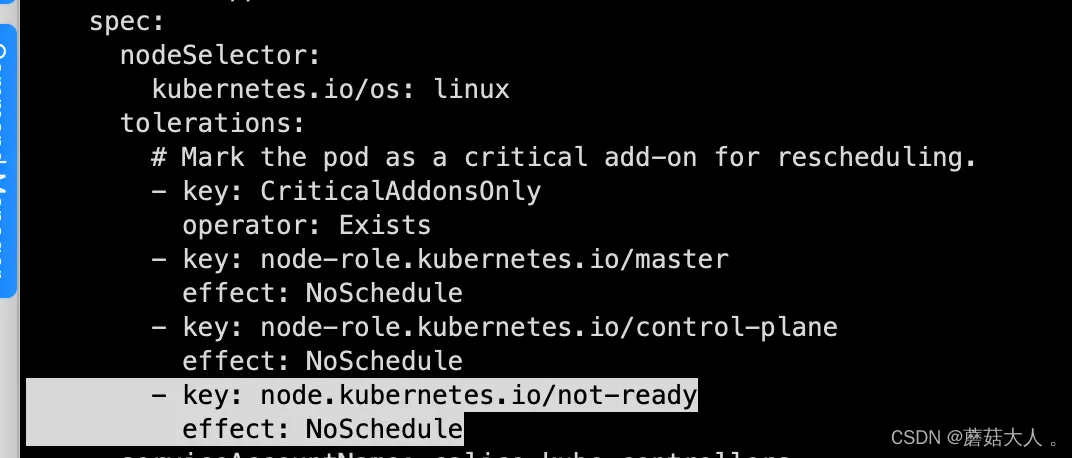

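If the deployment fails, check whether node taints are the cause. When Calico fails to deploy with a taint-related message (the taint cannot be removed while Calico is not running), edit calico-etcd.yaml: the tolerations shipped in the file do not match this cluster, so append the required toleration at the end of the tolerations list.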

8.1 Modify the Calico manifest
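The commands in this step embed the etcd certificates into the manifest as base64 on a single line, which is what a Secret's data fields and a one-line sed substitution both need. A minimal sketch of that encoding step follows; `b64_oneline` is a hypothetical helper name.

```shell
# Encode stdin as base64 on a single line.
# Some base64 implementations wrap long output, so newlines are stripped.
b64_oneline() {
  base64 | tr -d '\n'
}

# Usage (path as used in this guide; skipped if the file is absent):
CA_FILE=/etc/kubernetes/pki/etcd/etcd-ca.pem
if [ -r "$CA_FILE" ]; then
  ETCD_CA=$(b64_oneline < "$CA_FILE")
  echo "encoded length: ${#ETCD_CA}"
fi
```

The sed expressions in this step use @ as the delimiter for a reason: the base64 alphabet contains / and + but never @, so the encoded value cannot terminate the substitution early.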

sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://192.168.1.11:2379,https://192.168.1.12:2379,https://192.168.1.13:2379"#g' calico-etcd.yaml
ETCD_CA=`cat /etc/kubernetes/pki/etcd/etcd-ca.pem | base64 | tr -d '\n'`
ETCD_CERT=`cat /etc/kubernetes/pki/etcd/etcd.pem | base64 | tr -d '\n'`
ETCD_KEY=`cat /etc/kubernetes/pki/etcd/etcd-key.pem | base64 | tr -d '\n'`
sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico-etcd.yaml
sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico-etcd.yaml
# Change this to your own Pod network
POD_SUBNET="10.244.0.0/16"
sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# value: "192.168.0.0/16"@ value: '"${POD_SUBNET}"'@g' calico-etcd.yaml

8.2 Deploy Calico

kubectl apply -f calico-etcd.yaml

After deployment, check the status

kubectl get po -n kube-system

9 Install CoreDNS

cat > coredns.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
  - apiGroups:
    - ""
    resources:
    - endpoints
    - services
    - pods
    - namespaces
    verbs:
    - list
    - watch
  - apiGroups:
    - discovery.k8s.io
    resources:
    - endpointslices
    verbs:
    - list
    - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                  - key: k8s-app
                    operator: In
                    values: ["kube-dns"]
              topologyKey: kubernetes.io/hostname
      containers:
      - name: coredns
        image: registry.k8s.io/coredns/coredns:v1.13.1
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
          readOnly: true
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - all
          readOnlyRootFilesystem: true
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
        readinessProbe:
          httpGet:
            path: /ready
            port: 8181
            scheme: HTTP
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
            - key: Corefile
              path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.96.0.10
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP
  - name: metrics
    port: 9153
    protocol: TCP
EOF
kubectl apply -f coredns.yaml

10 Deploy Metrics Server

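This is the official manifest; applied unmodified, it fails with a missing-certificate error: https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

The manifest below is a modified version that adds the certificate path, the volume mount, and related settings. The certificate file is /etc/kubernetes/pki/front-proxy-ca.pem (generated earlier together with the other cluster certificates). Then install Metrics Server.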
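The modified manifest mounts /etc/kubernetes/pki from the host and runs the container as a non-root user (runAsUser: 1000), so the front-proxy CA file has to exist on the node and be readable by non-owners. The following pre-flight sketch checks this; `world_readable` is a hypothetical helper, and the check only inspects the "other" read bit of the file mode, not SELinux labels or ACLs.

```shell
# Return 0 if the path is a regular file whose mode grants read access
# to "other" users. Character 8 of the ls mode string is that bit.
world_readable() {
  [ -f "$1" ] || return 1
  [ "$(ls -lL -- "$1" | cut -c8)" = "r" ]
}

# Usage: run on every node that may schedule metrics-server.
CA=/etc/kubernetes/pki/front-proxy-ca.pem
if world_readable "$CA"; then
  echo "ok: $CA"
else
  echo "missing or not world-readable: $CA" >&2
fi
```

If the check fails on a node, copy the file there as in section 7.10 or adjust its permissions before applying the manifest.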

## Note: this is an older version; check GitHub if you need a newer one
cat > ./components.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-view: "true"
  name: system:aggregated-metrics-reader
rules:
- apiGroups:
  - metrics.k8s.io
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - nodes/metrics
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server-auth-reader
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  ports:
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    k8s-app: metrics-server
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 0
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      containers:
      - args:
        - --cert-dir=/tmp
        - --secure-port=4443
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        - --kubelet-insecure-tls
        - --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem
        - --requestheader-username-headers=X-Remote-User
        - --requestheader-group-headers=X-Remote-Group
        - --requestheader-extra-headers-prefix=X-Remote-Extra-
        image: registry.k8s.io/metrics-server/metrics-server:v0.6.3
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 3
          httpGet:
            path: /livez
            port: https
            scheme: HTTPS
          periodSeconds: 10
        name: metrics-server
        ports:
        - containerPort: 4443
          name: https
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /readyz
            port: https
            scheme: HTTPS
          initialDelaySeconds: 20
          periodSeconds: 10
        resources:
          requests:
            cpu: 100m
            memory: 200Mi
        securityContext:
          allowPrivilegeEscalation: false
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
        - mountPath: /etc/kubernetes/pki
          name: k8s-certs
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
      - hostPath:
          path: /etc/kubernetes/pki
        name: k8s-certs
---
apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  labels:
    k8s-app: metrics-server
  name: v1beta1.metrics.k8s.io
spec:
  group: metrics.k8s.io
  groupPriorityMinimum: 100
  insecureSkipTLSVerify: true
  service:
    name: metrics-server
    namespace: kube-system
  version: v1beta1
  versionPriority: 100
EOF
kubectl create -f components.yaml

Check the status

kubectl get po -n kube-system
kube-system   metrics-server-595f65d8d5-tcxkz   1/1   Running   4   277d

11 Cluster verification

A more detailed version of this cluster verification is in the kubeadm document.

11.1 Create a busybox pod

cat<<EOF | kubectl apply -f -
apiVersion: v1
kind: Pod
metadata:
  name: busybox
  namespace: default
spec:
  containers:
  - name: busybox
    image: docker.io/library/busybox:1.28
    command:
      - sleep
      - "3600"
    imagePullPolicy: IfNotPresent
  restartPolicy: Always
EOF
# Check
kubectl get pod
NAME      READY   STATUS    RESTARTS   AGE
busybox   1/1     Running   0          17s

11.2 Resolve the kubernetes Service in the default namespace from a pod

kubectl get svc
# NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
# kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   17h
kubectl exec busybox -n default -- nslookup kubernetes
# Server:    10.96.0.10
# Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local
# Name:      kubernetes
# Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

11.3 Check that names resolve across namespaces

kubectl exec busybox -n default -- nslookup kube-dns.kube-system
# Server:    10.96.0.10
# Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local
# Name:      kube-dns.kube-system
# Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

11.4 Every node must be able to reach the kubernetes Service on port 443 and the kube-dns Service on port 53
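The telnet checks in this step need the telnet client, which is not part of the tool list installed earlier in this guide. As an alternative, the same TCP reachability test can be sketched with bash's /dev/tcp pseudo-device. `port_open` is a hypothetical helper; it assumes bash and the coreutils timeout command are available, and it only tests TCP, so DNS over UDP on port 53 is not covered.

```shell
# Return 0 if a TCP connection to host:port succeeds within 3 seconds.
# $1 = host, $2 = port. Inside bash -c they arrive as $0 and $1.
port_open() {
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Usage: check the two Service IPs used in this guide.
for target in "10.96.0.1 443" "10.96.0.10 53"; do
  set -- $target
  if port_open "$1" "$2"; then
    echo "reachable: $1:$2"
  else
    echo "NOT reachable: $1:$2"
  fi
done
```

Run it on each node; an unreachable Service IP usually points at kube-proxy or the CNI rather than at the Service itself.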

telnet 10.96.0.1 443
# Trying 10.96.0.1...
# Connected to 10.96.0.1.
# Escape character is '^]'.
telnet 10.96.0.10 53
# Trying 10.96.0.10...
# Connected to 10.96.0.10.
# Escape character is '^]'.
curl 10.96.0.10:53
# curl: (52) Empty reply from server

11.5 Pods must be able to reach each other

[root@master01 ~]# kubectl get po -A -owide
NAMESPACE     NAME                                       READY   STATUS    RESTARTS   AGE   IP               NODE       NOMINATED NODE   READINESS GATES
default       busybox                                    1/1     Running   0          10m   172.20.59.193    master02   <none>           <none>
kube-system   calico-kube-controllers-56d77c98f4-nfrhl   1/1     Running   0          22m   192.168.1.156    master01   <none>           <none>
kube-system   calico-node-9297q                          1/1     Running   0          22m   192.168.1.66     node01     <none>           <none>
kube-system   calico-node-cs955                          1/1     Running   0          22m   192.168.1.119    node04     <none>           <none>
kube-system   calico-node-d6j8d                          1/1     Running   0          22m   192.168.1.80     node03     <none>           <none>
kube-system   calico-node-dg68l                          1/1     Running   0          22m   192.168.1.156    master01   <none>           <none>
kube-system   calico-node-dpq9j                          1/1     Running   0          22m   192.168.1.9      node02     <none>           <none>
kube-system   calico-node-h5gqh                          1/1     Running   0          22m   192.168.1.229    master03   <none>           <none>
kube-system   calico-node-qngs7                          1/1     Running   0          22m   192.168.1.148    master02   <none>           <none>
kube-system   coredns-6574fb7bb7-lb9jj                   1/1     Running   0          14m   172.21.231.129   node02     <none>           <none>
kube-system   metrics-server-9cbc97fd5-n6tph             1/1     Running   0          12m   172.18.71.1      master03   <none>           <none>
# Enter busybox and ping a pod on another node
kubectl exec -ti busybox -- sh
/ # ping 3.7.191.64
PING 3.7.191.64 (3.7.191.64): 56 data bytes
64 bytes from 3.7.191.64: seq=0 ttl=63 time=0.358 ms
64 bytes from 3.7.191.64: seq=1 ttl=63 time=0.668 ms
64 bytes from 3.7.191.64: seq=2 ttl=63 time=0.637 ms
64 bytes from 3.7.191.64: seq=3 ttl=63 time=0.624 ms
64 bytes from 3.7.191.64: seq=4 ttl=63 time=0.907 ms

At this point the installation is essentially complete. The add-ons below are older versions; if you need them, install the latest versions directly from the official sources.

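12 Install Helm

13 Install k8tz for time zone synchronization

Note: this plugin affects some services and may cause them to fail to start. See the k8tz GitHub repository for details. To install: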

helm repo add k8tz https://k8tz.github.io/k8tz/
helm install k8tz k8tz/k8tz --set timezone=Asia/Shanghai

Use annotations to set a different time zone for a specific namespace or pod

kubectl annotate namespace special-namespace k8tz.io/timezone=UTC

14 Install an ingress or Gateway API controller

15 kubectl auto-completion

yum -y install bash-completion
source /usr/share/bash-completion/bash_completion
source <(kubectl completion bash)
echo "source <(kubectl completion bash)" >> ~/.bashrc
# Load bash-completion
source /etc/profile.d/bash_completion.sh

You can also use a shorthand alias for kubectl that works with completion

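echo 'alias k=kubectl' >> ~/.bashrc
echo 'complete -o default -F __start_kubectl k' >> ~/.bashrc
source ~/.bashrc

This completes the installation. If you run into problems or find errors in this document, please send me a private message!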